I’ve been meaning to spend more time creating a multi-agent workflow in AI Foundry that showcases its capabilities and demonstrates a flow that isn’t a simple start-to-finish design. My first attempt, which I haven’t gotten back to yet, let a user leverage an MCP server provided by a Logic App, but that workflow got held up because I couldn’t work out how to remove the approval requirement when the agent called the tool (I still need to get to that). What I ended up building over an evening was a workflow that lets a user select an LLM model from the available options, receive an answer to their question, and receive suggestions for what they could ask next. Those who are familiar with Copilot will recognize that the recommendations effectively mimic what M365 Copilot does. To itemize the process, this workflow will:

- Present the user with the option of selecting an available LLM model via text

- Allow the user to interactively ask a question

- Return the answer to the question

- Provide recommendations for follow-up questions

- Loop back so the user can ask another question

Using this type of workflow allows me to demonstrate how to incorporate multiple agents powered by different LLM models, store input and output in variables, and loop the workflow while maintaining context. I hope the following demonstration showcases just how powerful AI Foundry can be in its current state. I have to say that I encountered some nuances where I wished I could do something I couldn’t, but Microsoft is always improving its tools and I fully expect improvements to become available as soon as I hit the publish button for this post.

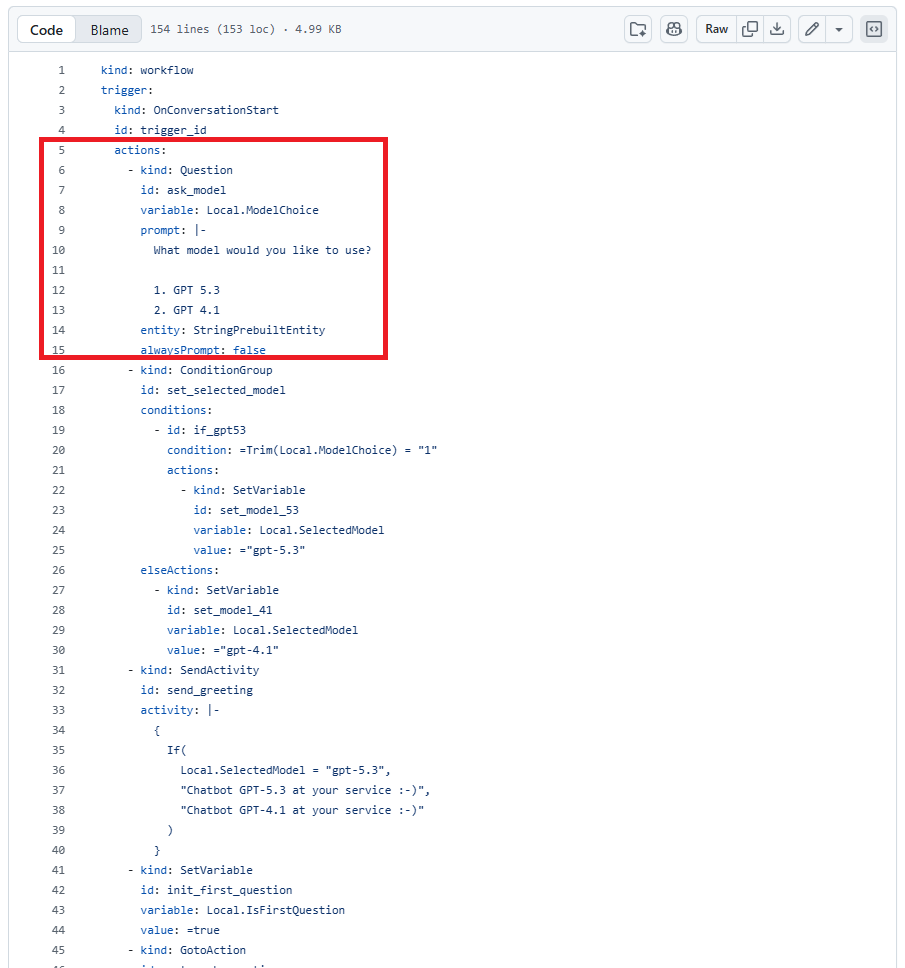

The Complete Workflow YAML

Let’s start by looking at the full workflow configuration here in my GitHub repo: https://github.com/terenceluk/Azure/blob/main/AI%20Foundry/Workflows/Chatbot-Model-Selection.yaml

The Flow Architecture

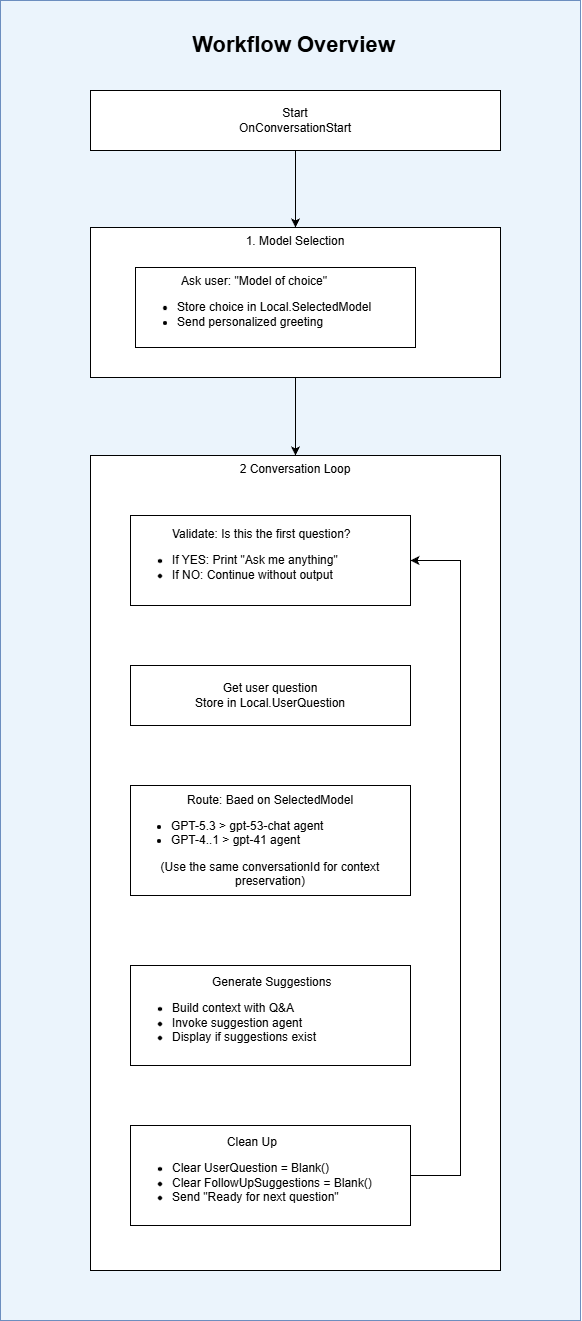

Before we dive into the step-by-step configuration, let’s recap what this workflow accomplishes:

- Model Selection: When a conversation starts, users choose between GPT-5.3 and GPT-4.1

- Conversation Loop: The workflow continuously accepts questions and routes them to the selected model

- Follow-up Suggestions: After each answer, a specialized agent generates contextual follow-up questions

- Context Preservation: The workflow maintains conversation history so follow-up questions can reference previous exchanges

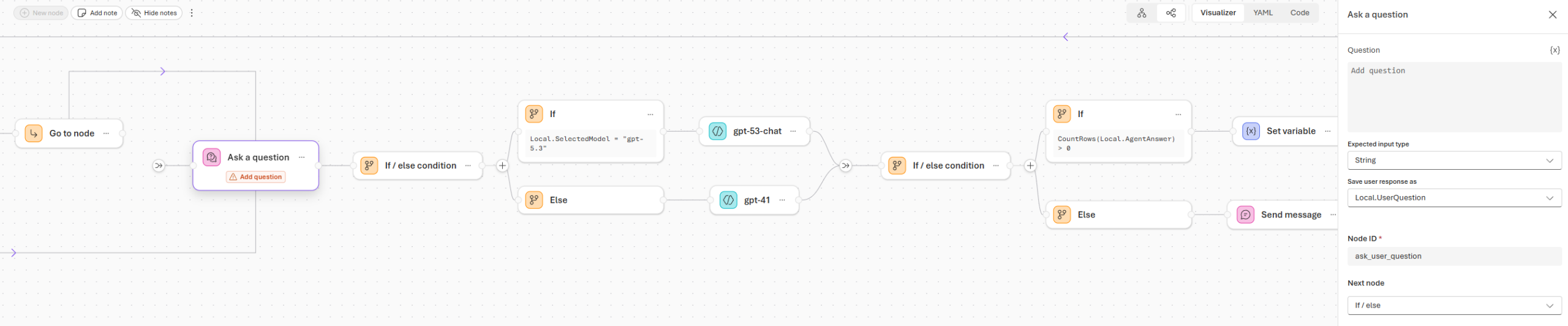

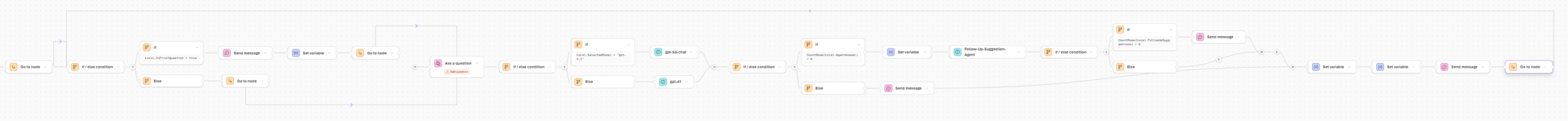

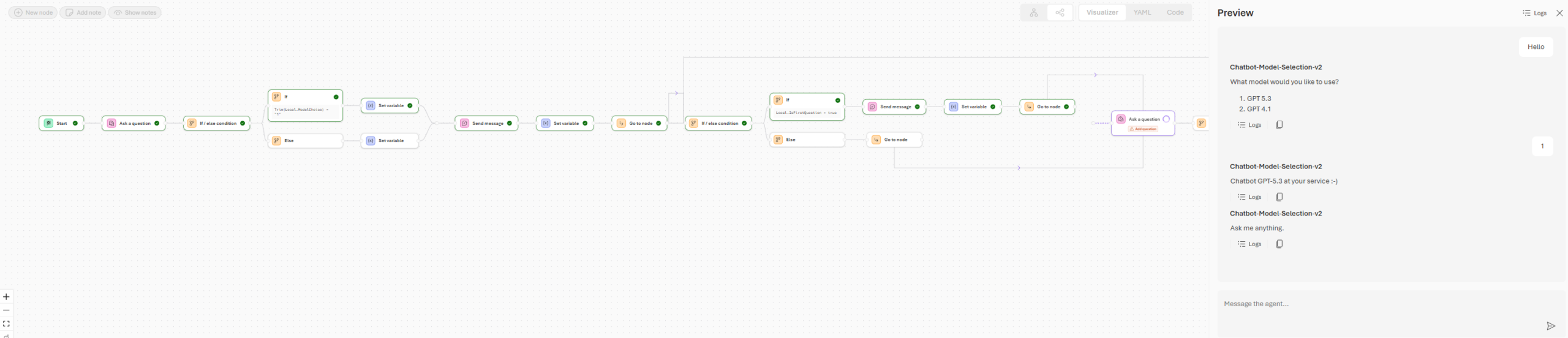

Here’s a high-level overview of the entire flow:

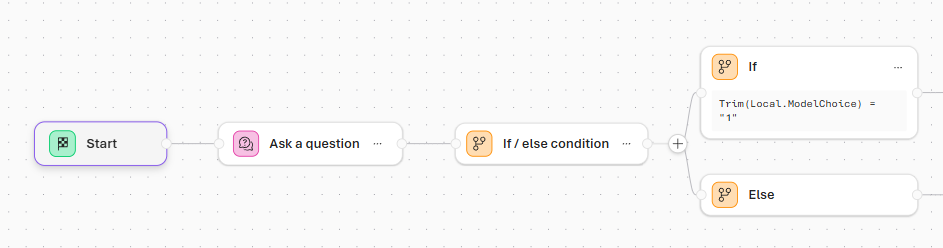

AI Foundry Workflow Visualizer

The following is the full workflow in the AI Foundry visualizer:

Workflow Configuration

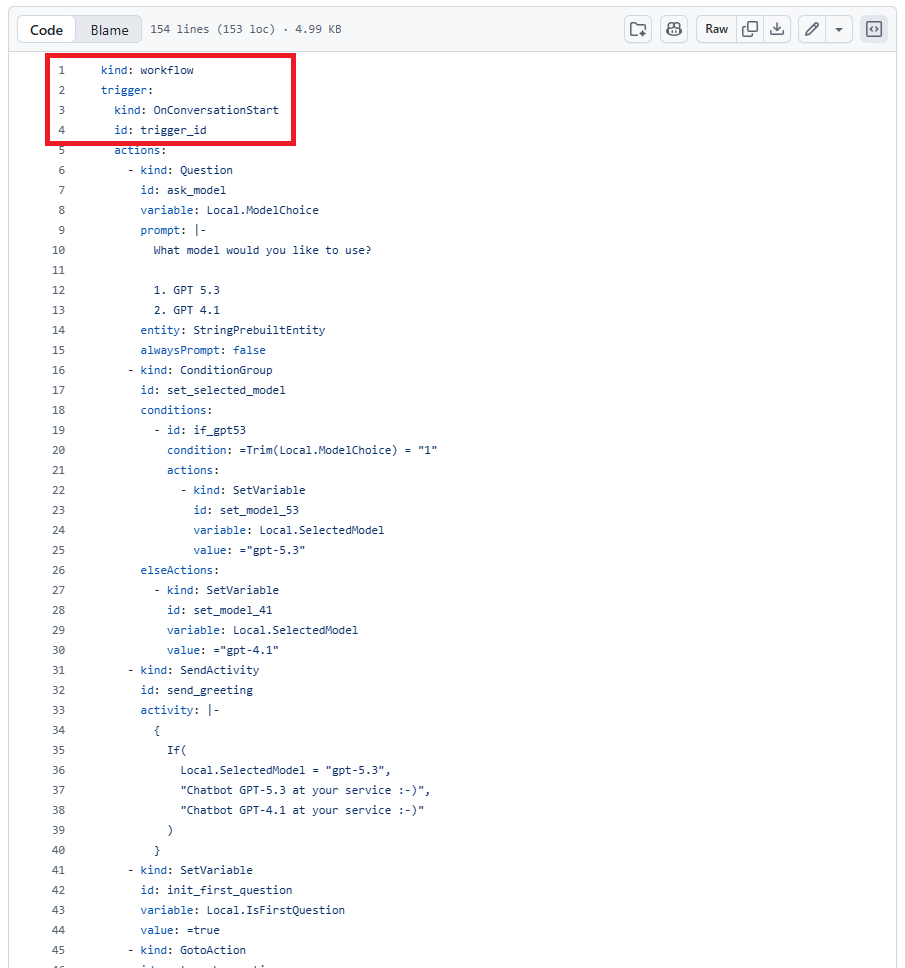

Step 1: Workflow Trigger

Start by creating a new workflow and selecting the OnConversationStart trigger. This ensures the workflow activates immediately when a user initiates a conversation.

The trigger configuration is straightforward:

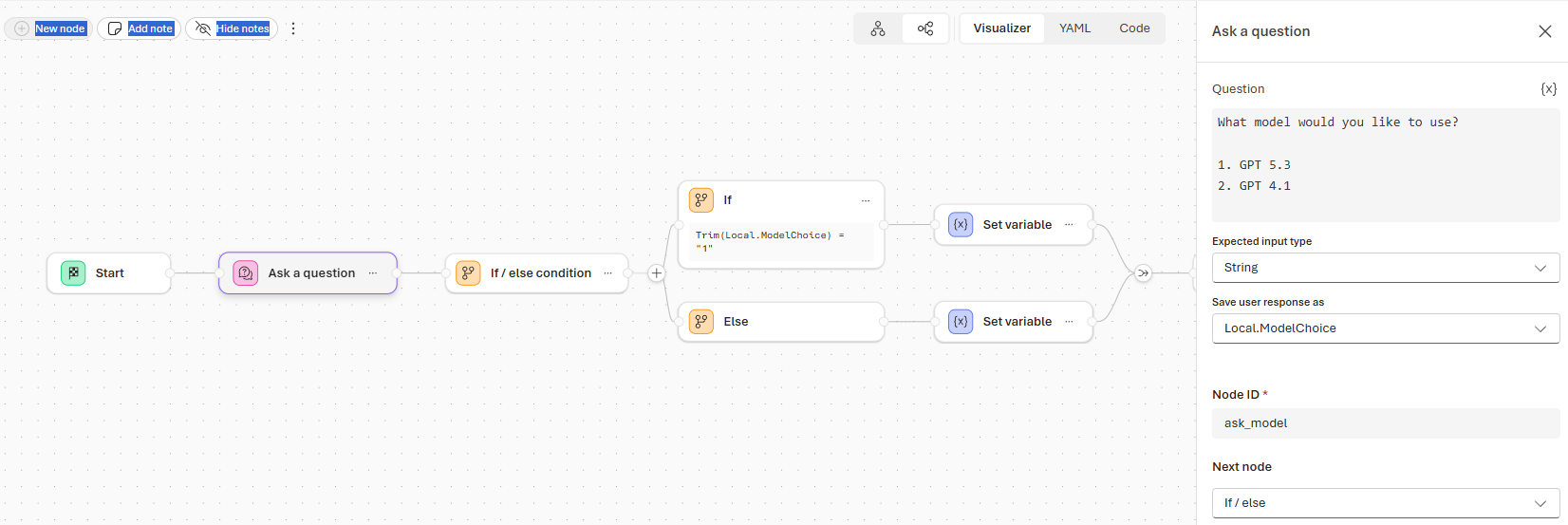

Step 2: Model Selection Question

The first action presents users with a choice of which AI model they’d like to use.

The response is stored in the Local.ModelChoice variable, which is used in the next step.

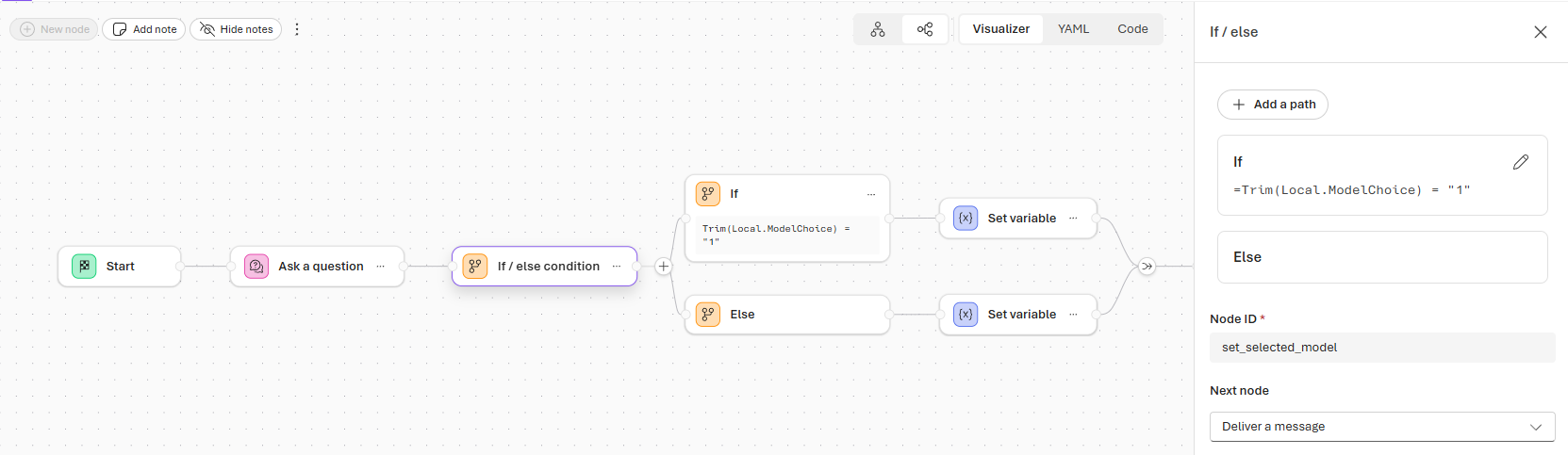

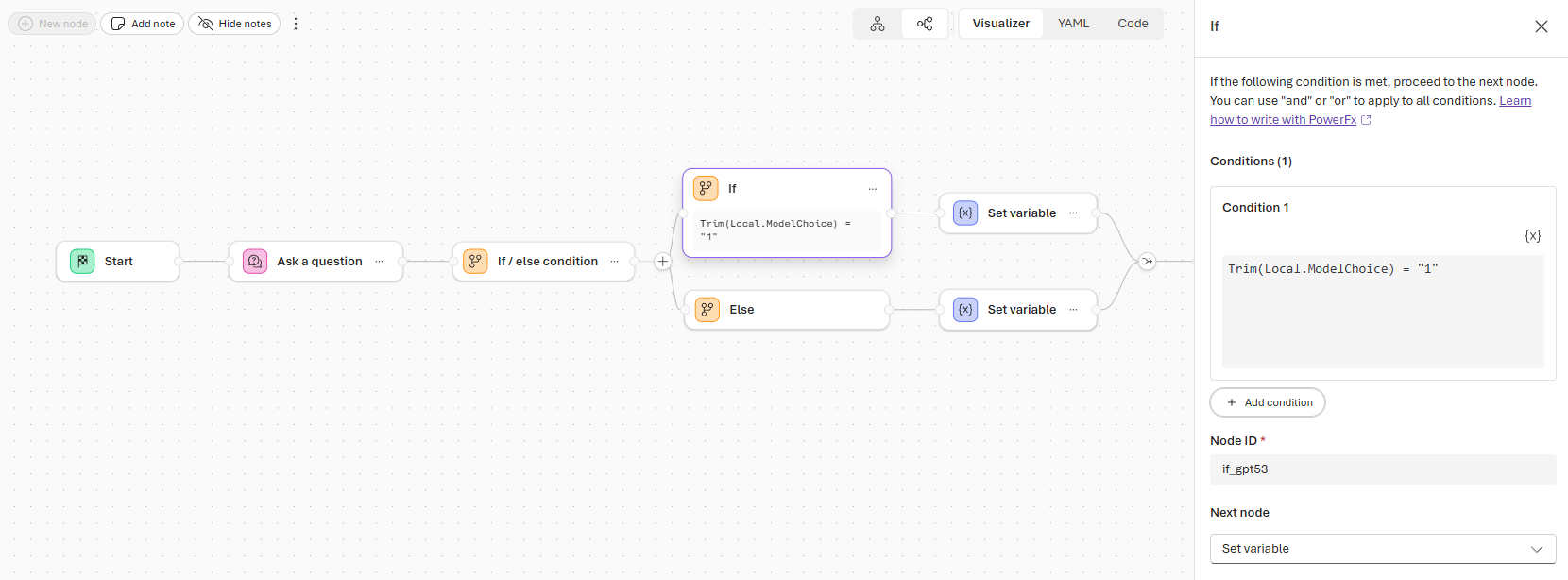

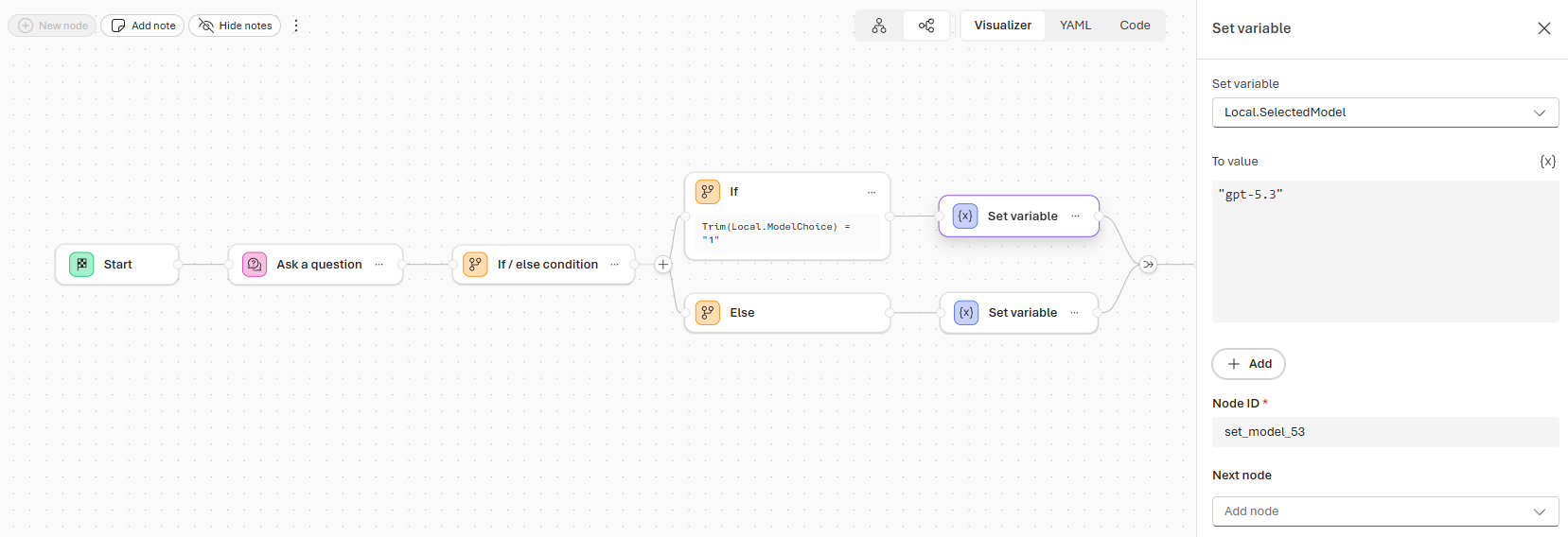

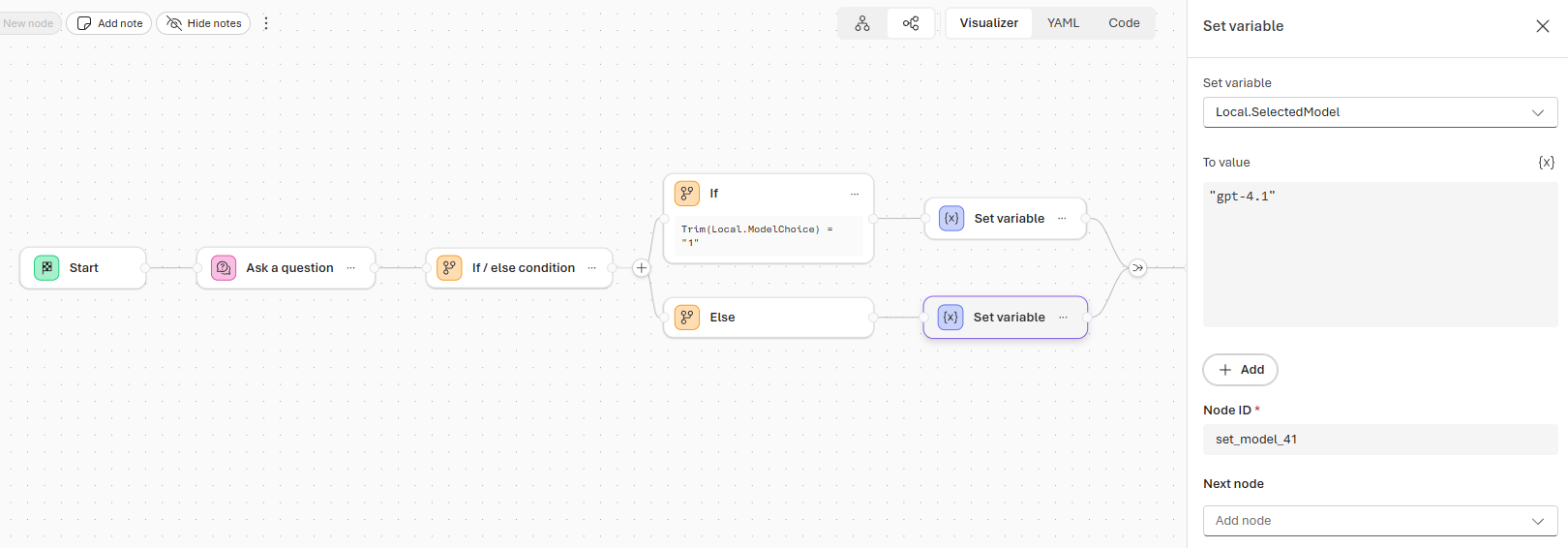

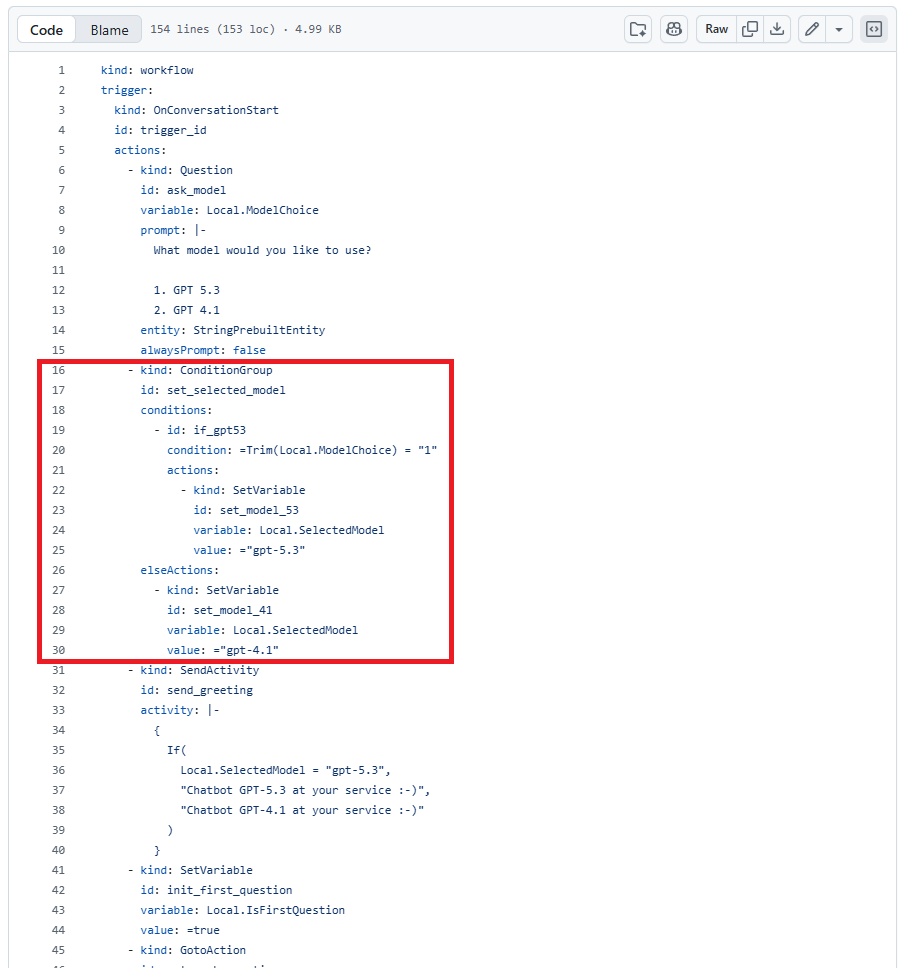

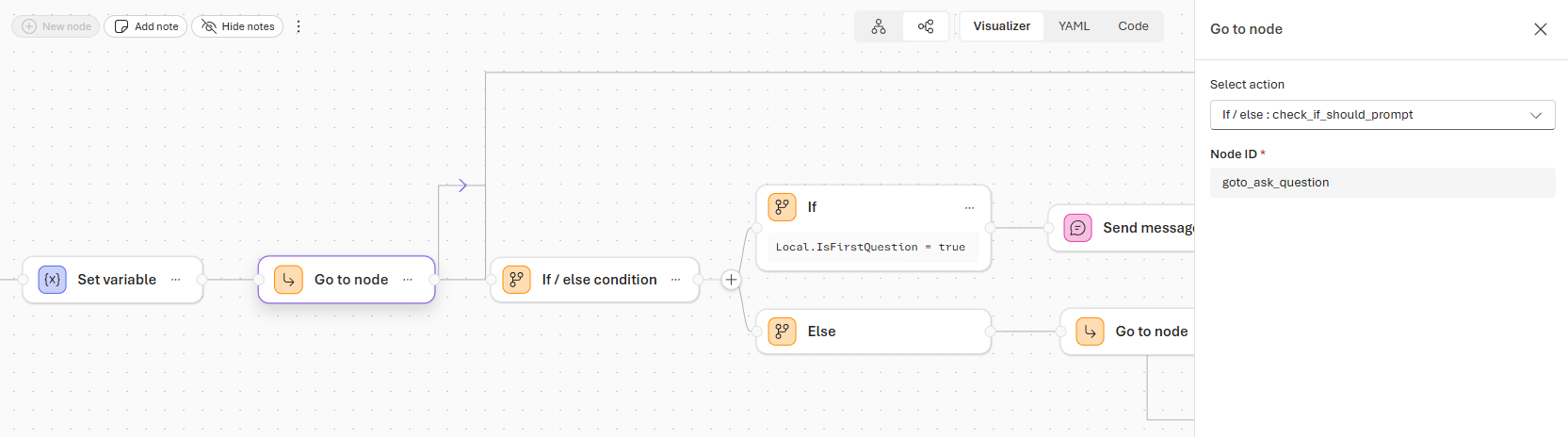

Step 3: Set the selected model based on the user’s choice

This condition group translates the user’s numeric choice into an actual model identifier:

The Trim() function handles any accidental spaces in the user’s input, and the resulting model identifier is stored in the Local.SelectedModel variable, which persists for the entire conversation.

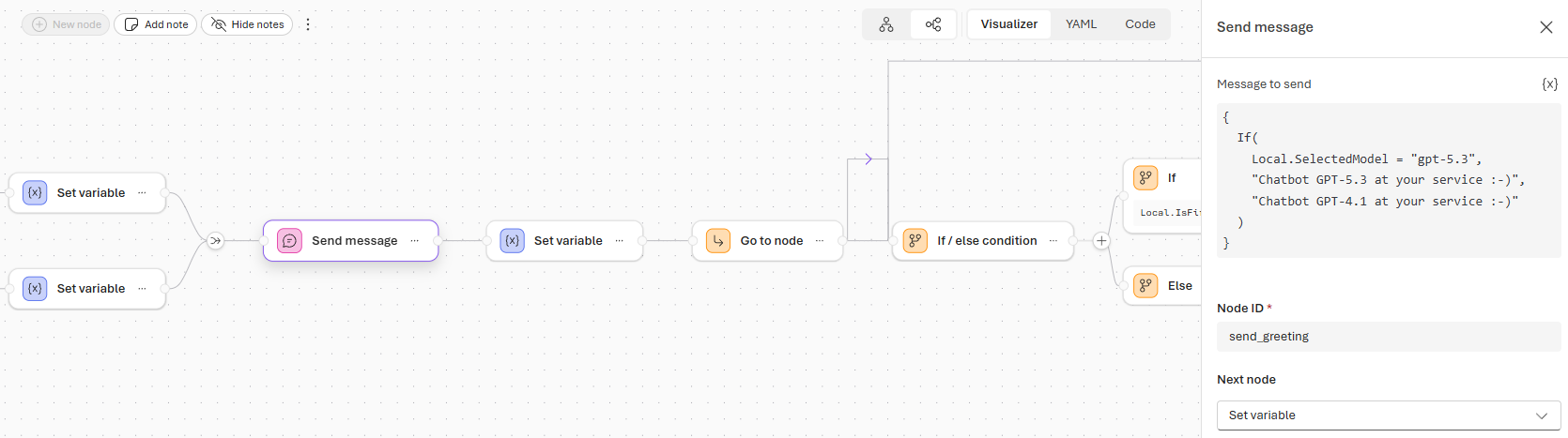

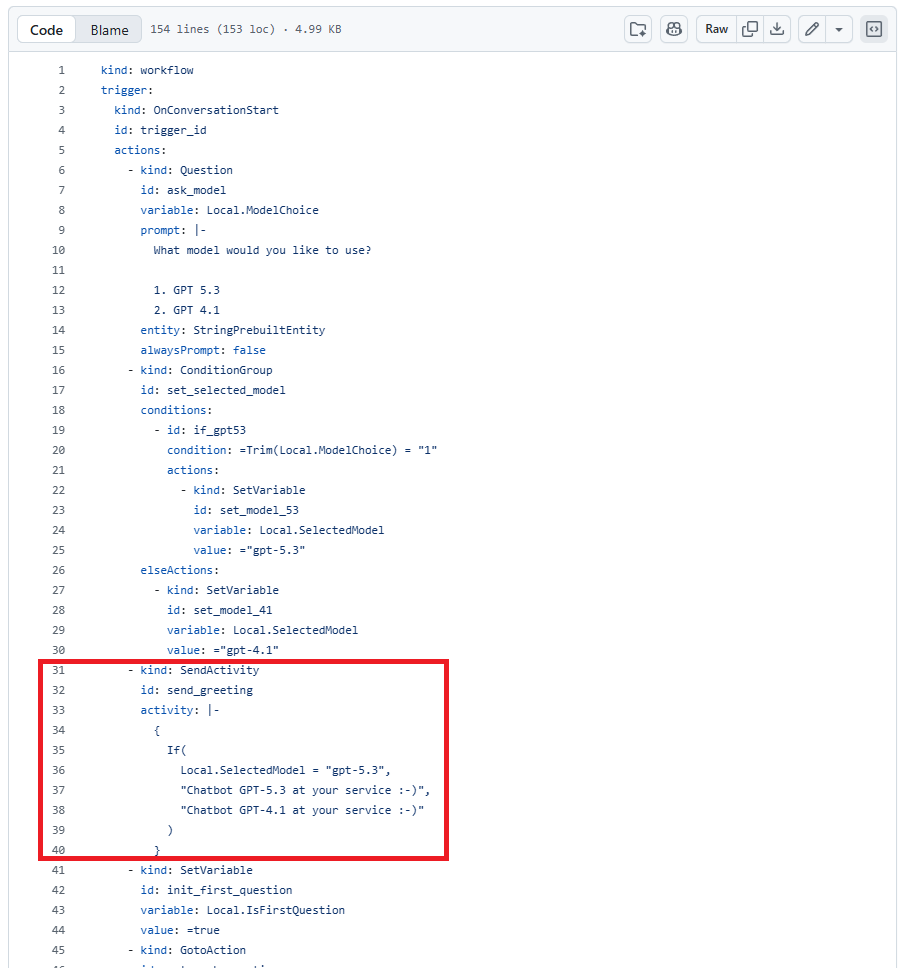
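For readers who think in code, the condition group in Step 3 boils down to a trim followed by a lookup. The following is a minimal plain-Python sketch, not AI Foundry YAML or Power Fx. It assumes option "1" maps to GPT-5.3 and option "2" maps to GPT-4.1, and the identifiers gpt-5.3 and gpt-4.1 are hypothetical deployment names.

```python
from typing import Optional

# Hypothetical mapping: the option numbers and deployment names are assumptions
MODEL_OPTIONS = {
    "1": "gpt-5.3",
    "2": "gpt-4.1",
}


def select_model(model_choice: str) -> Optional[str]:
    """Mimic the Step 3 condition group: trim the raw answer, then map it to a model id.

    Returns None for anything unrecognized; how the real workflow handles
    invalid input is not covered by this sketch.
    """
    return MODEL_OPTIONS.get(model_choice.strip())
```

Python's str.strip() only removes leading and trailing whitespace, whereas Power Fx Trim() also collapses repeated spaces between words. For a single-digit answer padded with spaces, both yield the same result.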

Step 4: Send a personalized greeting based on the selected LLM model

The Power Fx If() function is used to send a personalized greeting based on the selected model:

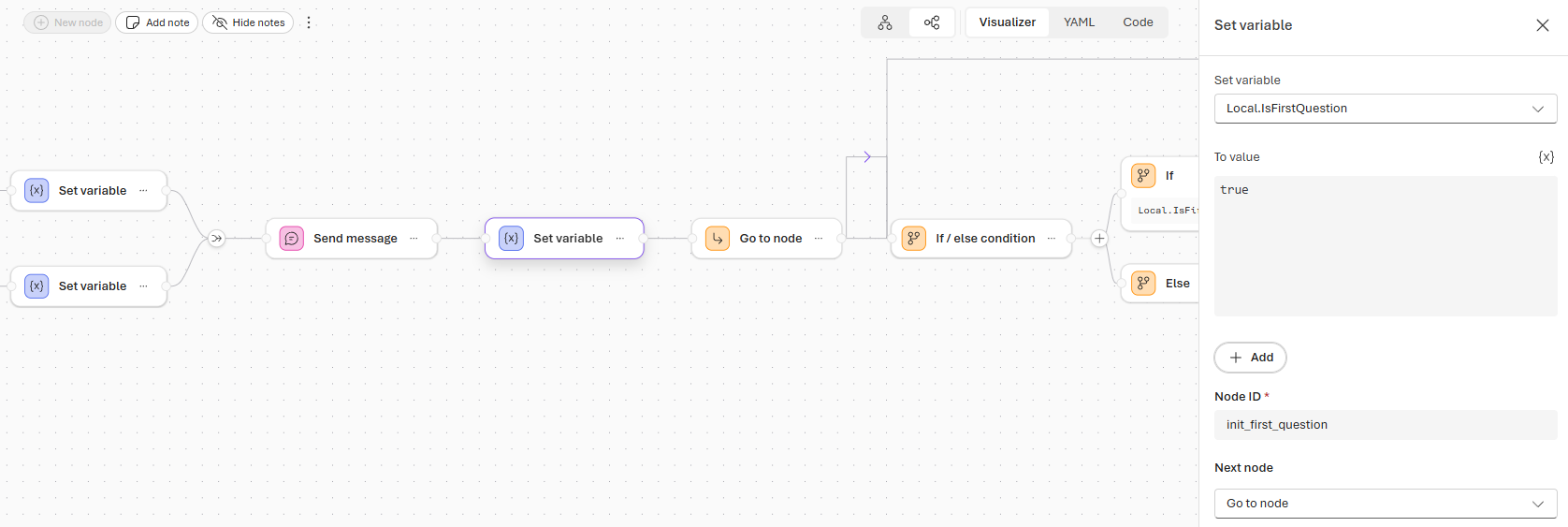

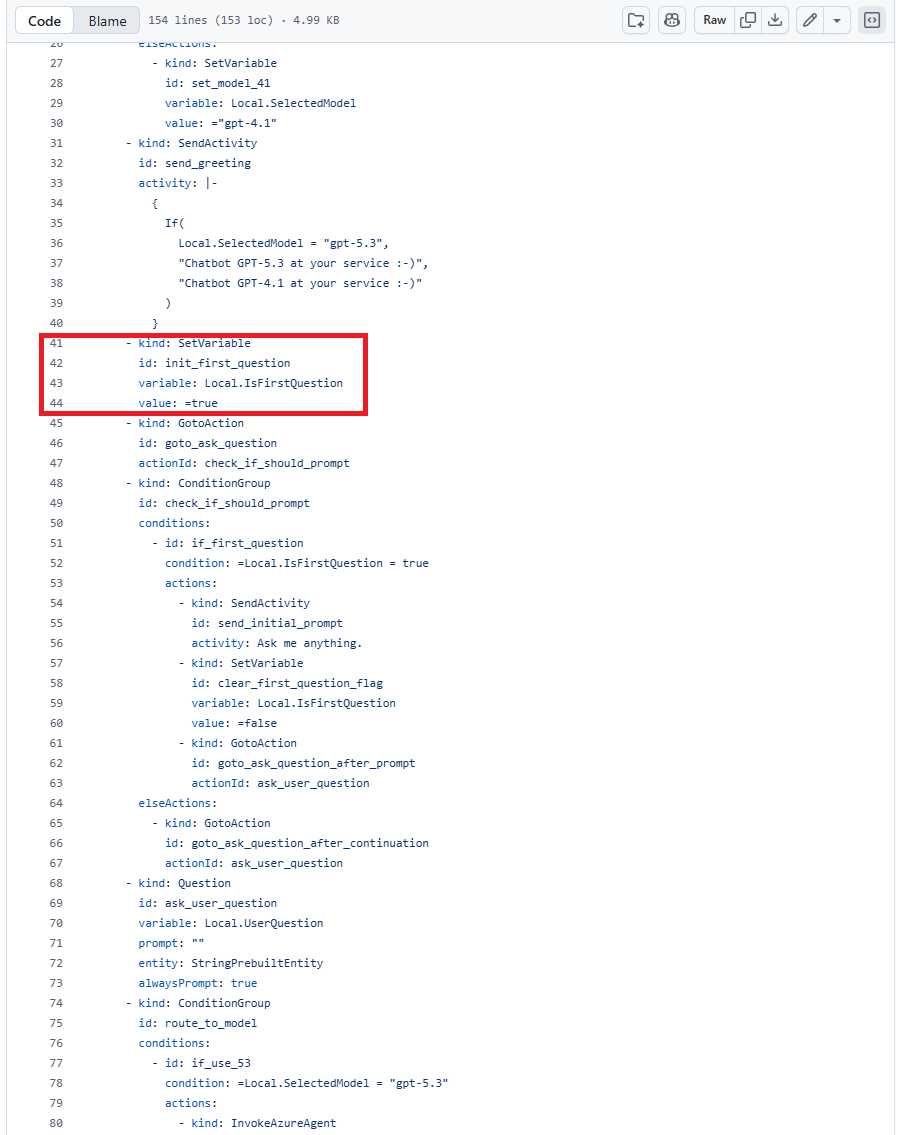
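A Power Fx If() with a condition, a result, and a default result behaves like a two-way branch. The plain-Python sketch below only illustrates that shape; the model identifiers and the greeting wording are invented placeholders, not the text used in the workflow.

```python
def greeting(selected_model: str) -> str:
    """Two-way branch in the spirit of If(condition, ThenResult, DefaultResult).

    The model ids and greeting wording are hypothetical placeholders.
    """
    if selected_model == "gpt-5.3":
        return "You're now chatting with GPT-5.3. How can I help?"
    # Default result: any other selection falls through to the GPT-4.1 greeting
    return "You're now chatting with GPT-4.1. How can I help?"
```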

Step 5: Initialize the first question flag

We store the value true to indicate that this is the user’s first question, so that the message “Ask me anything.” can be sent.

Step 6: Create the main conversation loop

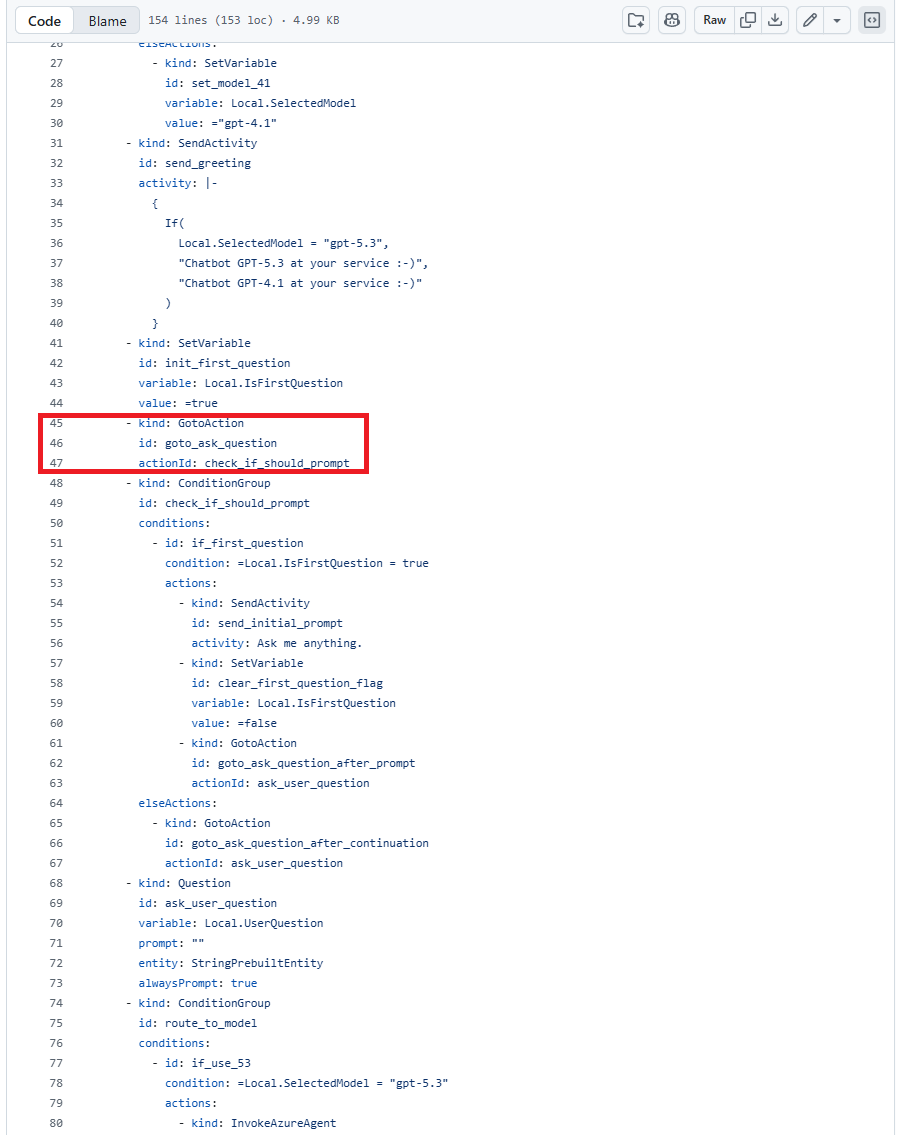

The workflow now uses GotoAction to jump to the main conversation handler:

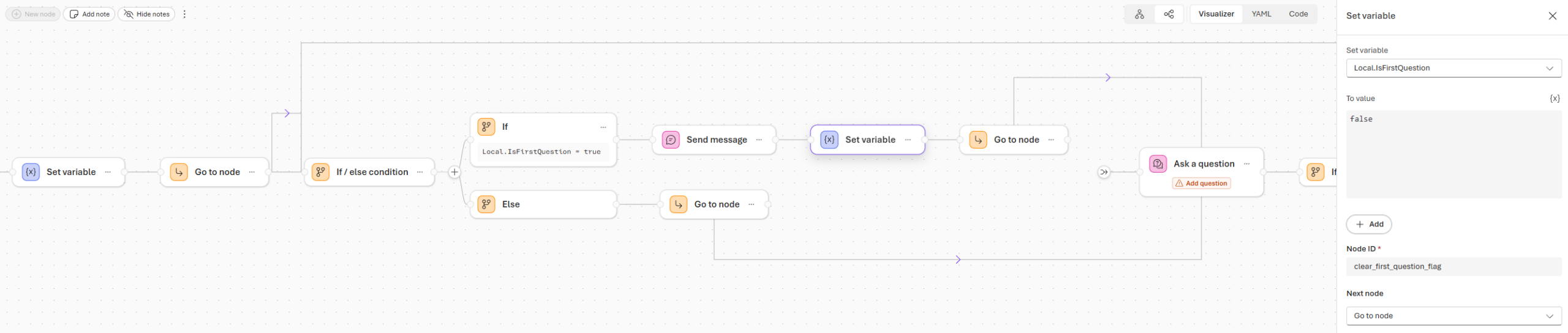

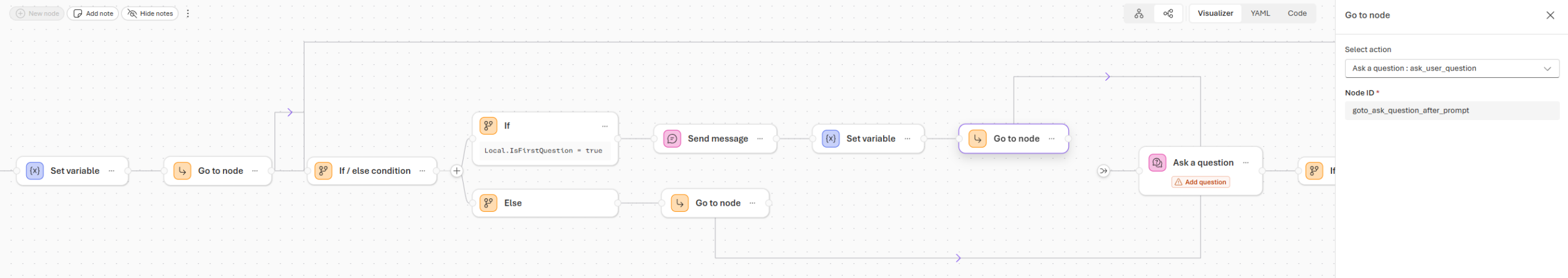

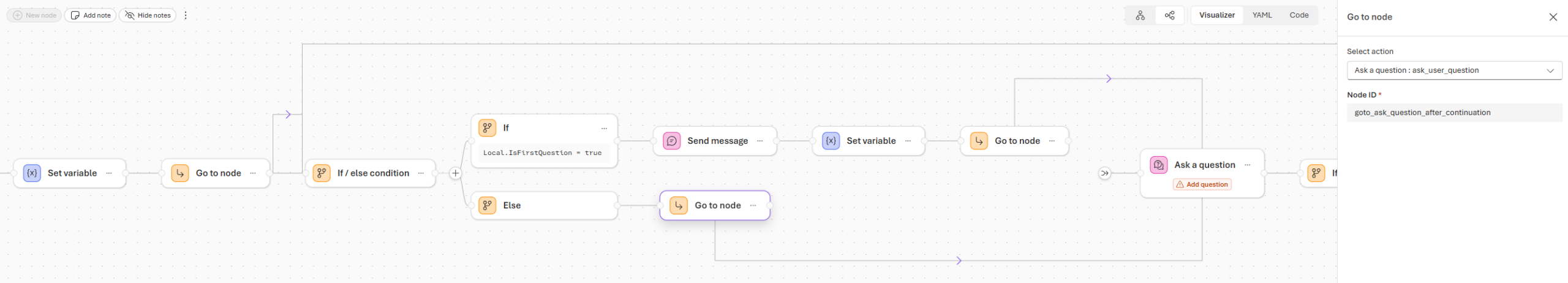

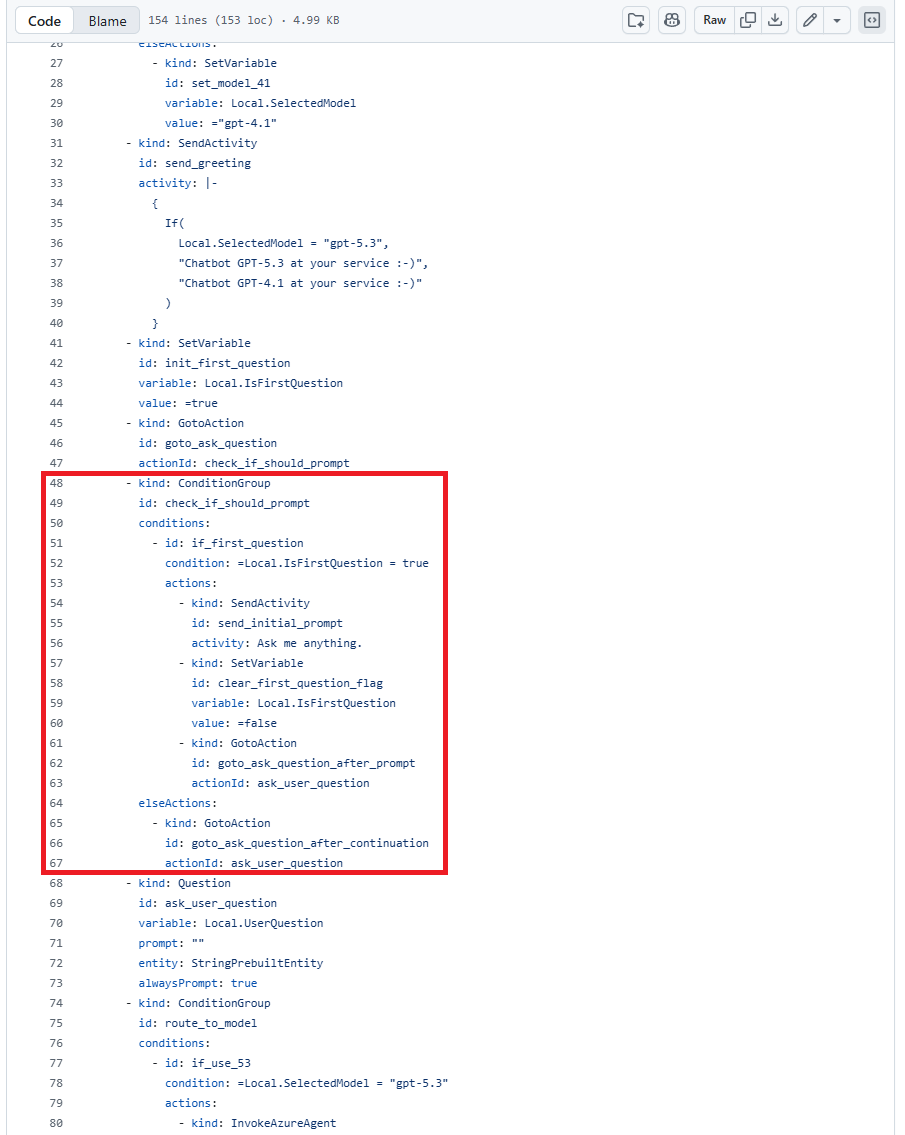

Step 7: Check if this is the first question

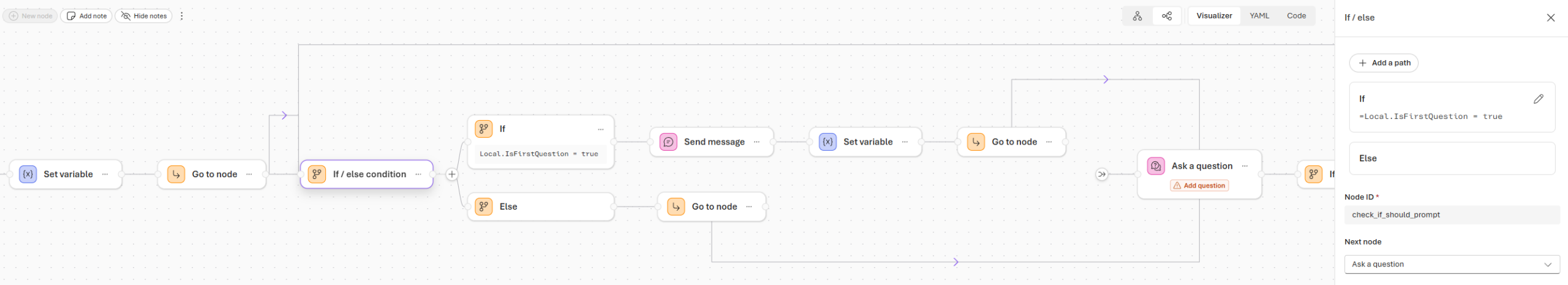

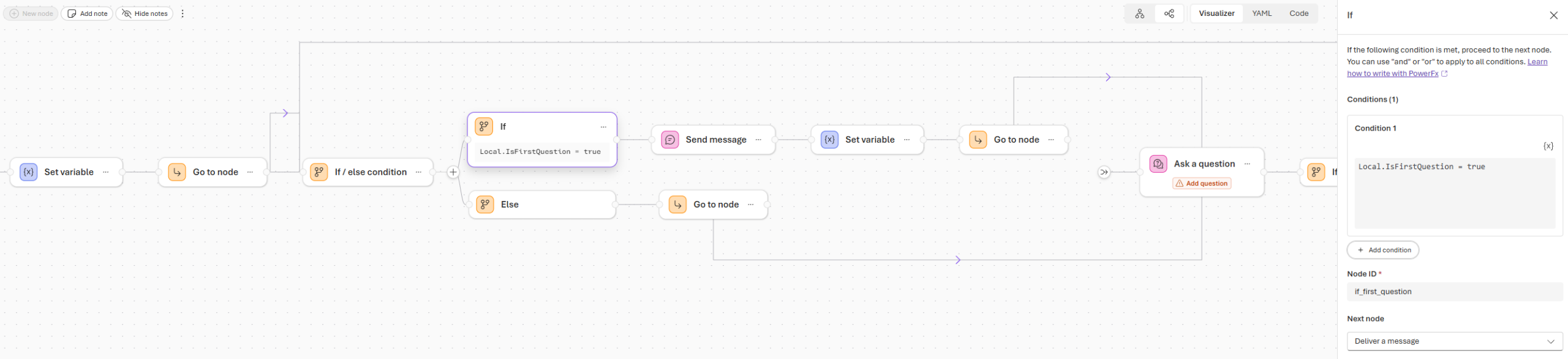

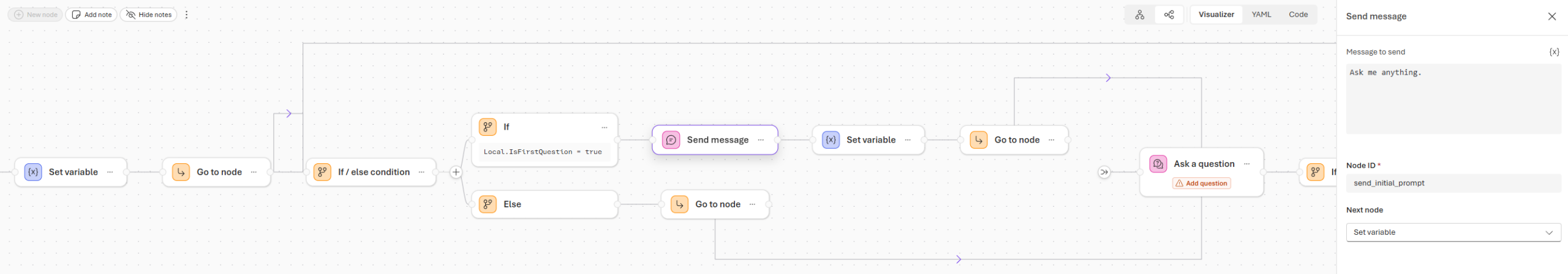

This condition group handles the branching logic for first versus subsequent questions:

If it isn’t the first question, the workflow goes straight to asking for the question:

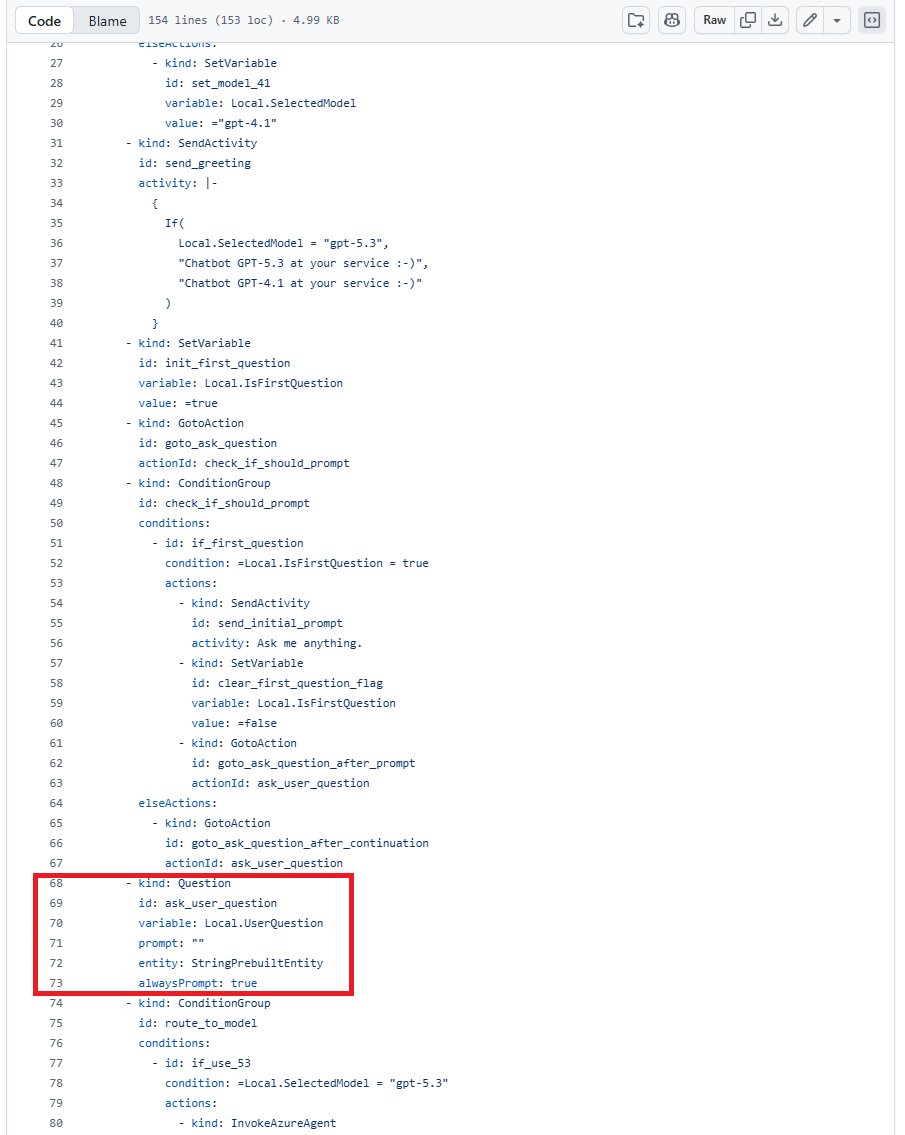
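The first-question check is a simple flag test. The plain-Python sketch below illustrates the branch under two assumptions: the flag is called IsFirstQuestion (a hypothetical name, since the text does not give one), and it is set to false once the opener has been sent, which the text does not show explicitly.

```python
from typing import Dict, List


def before_question(state: Dict[str, bool], outbox: List[str]) -> None:
    """Step 7 branch: send the opener only on the first pass, then fall through to Step 8."""
    if state["IsFirstQuestion"]:
        outbox.append("Ask me anything.")
        # Assumption: the flag is cleared after the first pass so the opener isn't repeated
        state["IsFirstQuestion"] = False
    # In both branches, control continues to the question step (Step 8)
```

Calling it twice in a row sends the opener once, which is the behaviour the condition group is there to guarantee.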

Step 8: Capture the user’s questions

The workflow now waits for user input. Note that the Question text box is left empty because there is no need to output a prompt at this point:

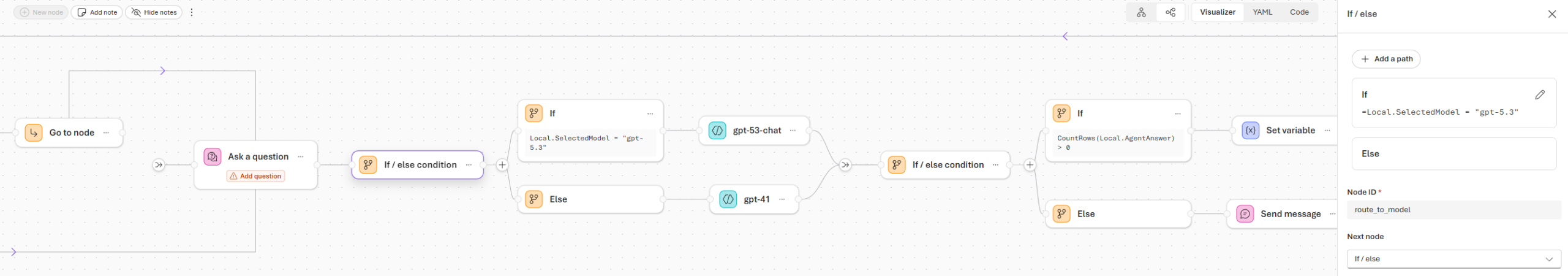

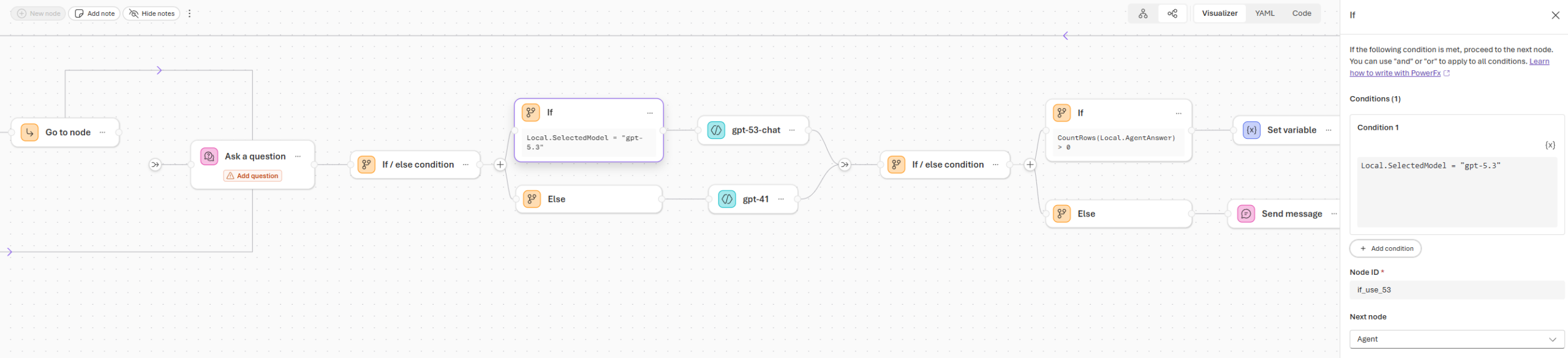

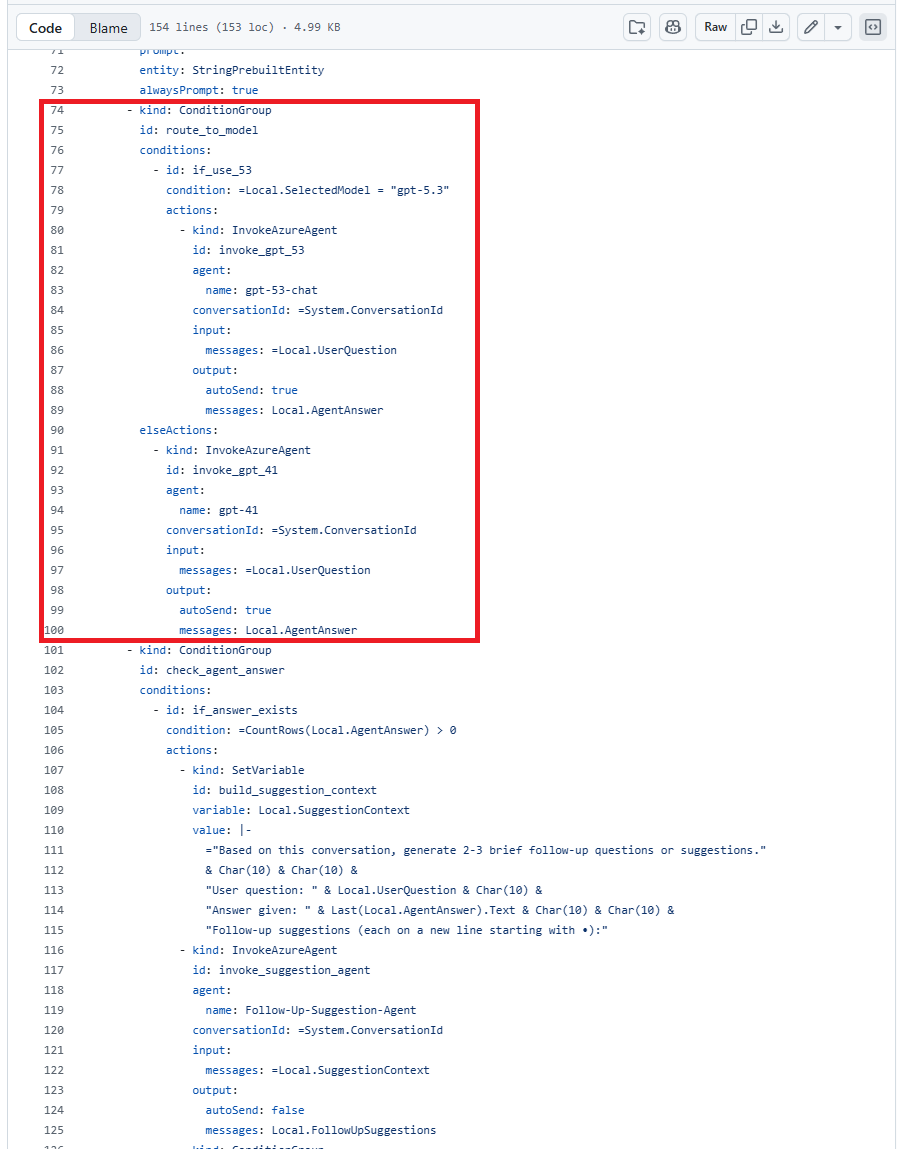

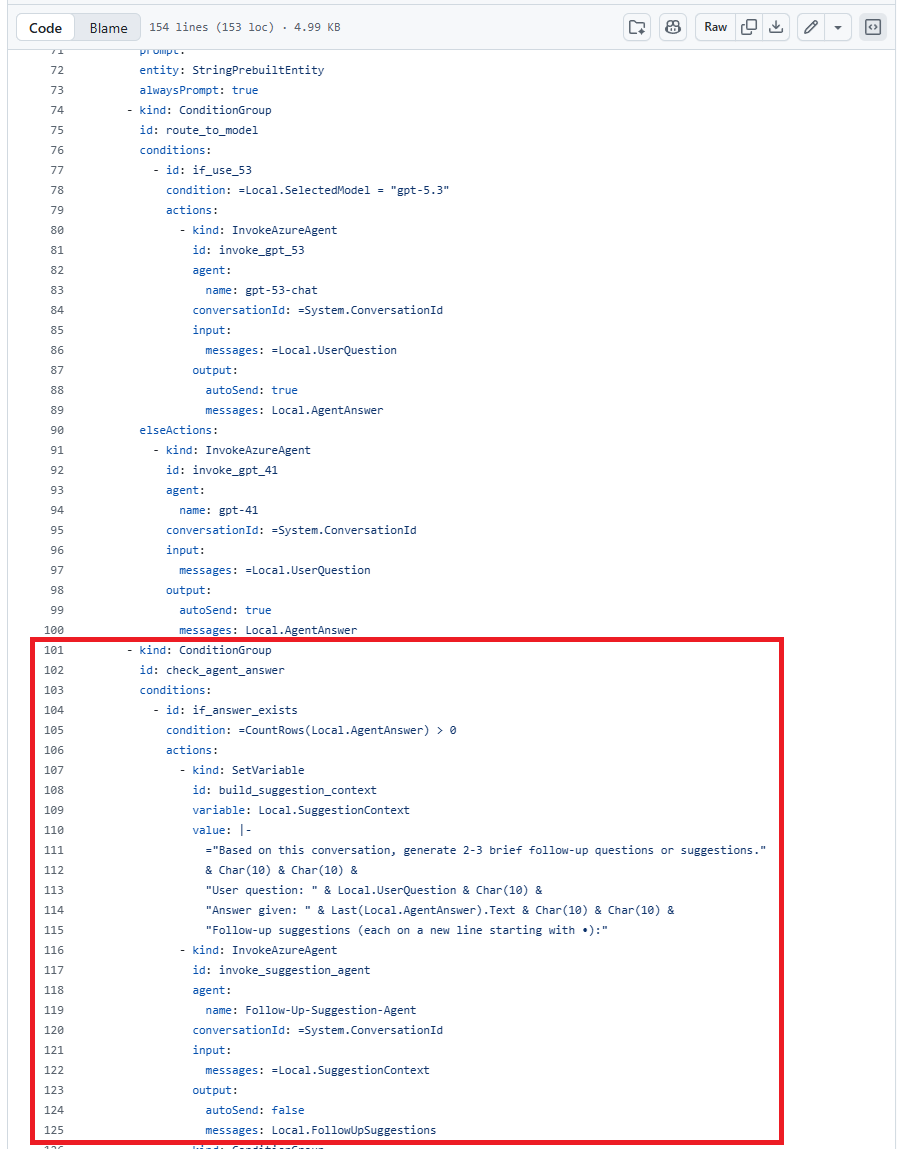

Step 9: Route to the selected LLM model

The question will be routed to the appropriate agent based on the earlier LLM selection:

Note: The conversationId: =System.ConversationId parameter is what enables context preservation. Because the same conversation ID is passed to the agent on each invocation, the agent works with the full conversation history. This is why the model knows what to do when the first question asked is “What is 1+1?” and the follow-up is “Add 2 to it”.

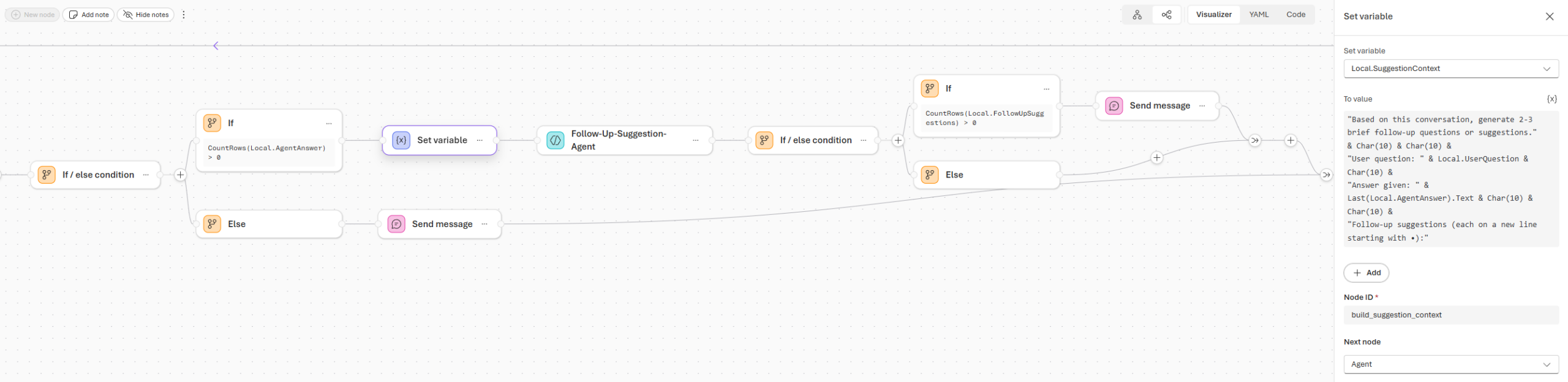

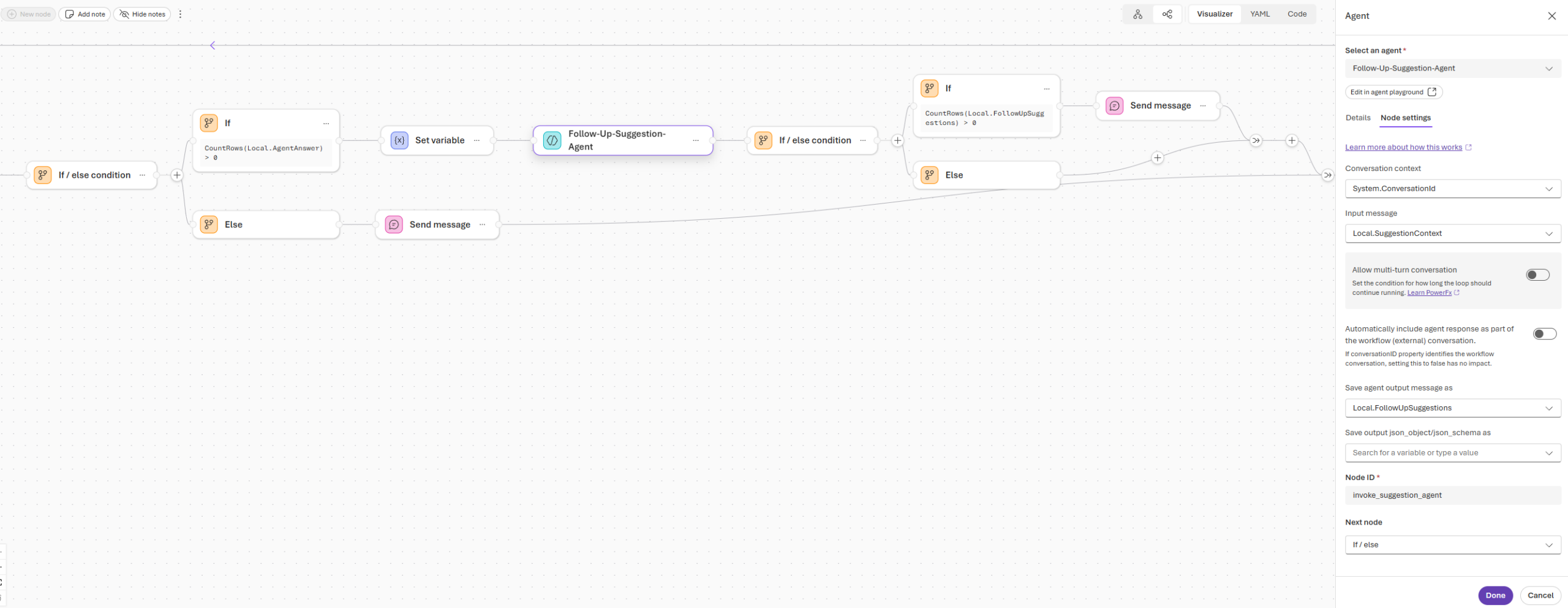
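Conceptually, Step 9 is a lookup from the selected model to an agent, with the conversation ID passed along unchanged on every call. The plain-Python sketch below shows only that routing; the agent functions and the conversation ID strings are hypothetical and do not call any AI Foundry API.

```python
from typing import Callable, Dict

AgentFn = Callable[[str, str], str]


# Hypothetical stand-ins for the two Foundry agents; a real agent would call its model deployment
def gpt53_agent(question: str, conversation_id: str) -> str:
    return f"[gpt-5.3 | {conversation_id}] {question}"


def gpt41_agent(question: str, conversation_id: str) -> str:
    return f"[gpt-4.1 | {conversation_id}] {question}"


AGENTS: Dict[str, AgentFn] = {"gpt-5.3": gpt53_agent, "gpt-4.1": gpt41_agent}


def route_question(selected_model: str, question: str, conversation_id: str) -> str:
    """Pick the agent matching the selected model and pass the same conversation id every time."""
    agent = AGENTS[selected_model]
    return agent(question, conversation_id)
```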

Step 10: Generate Follow-Up Suggestions

Once an answer is generated and sent to the user, we set the variable named Local.SuggestedContext with instructions for the agent, then use it to invoke the agent that is responsible for generating meaningful suggestions:

The Char(10) function inserts a line break (character code 10 is the newline character).

Last(Local.AgentAnswer) handles scenarios where the agent streams its response or sends multiple messages, which could otherwise pass a partial or intermediate response to the suggestion agent. Taking the last message ensures the suggestion agent receives the final, complete answer.

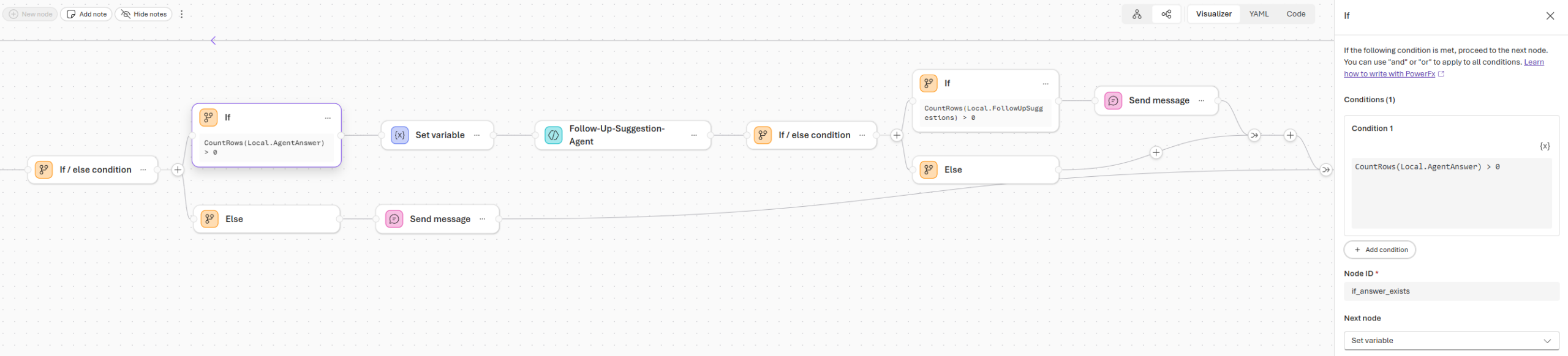

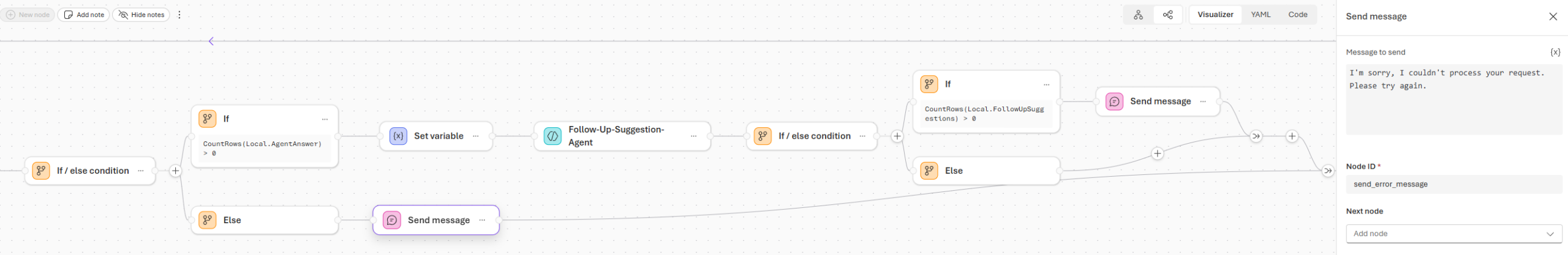

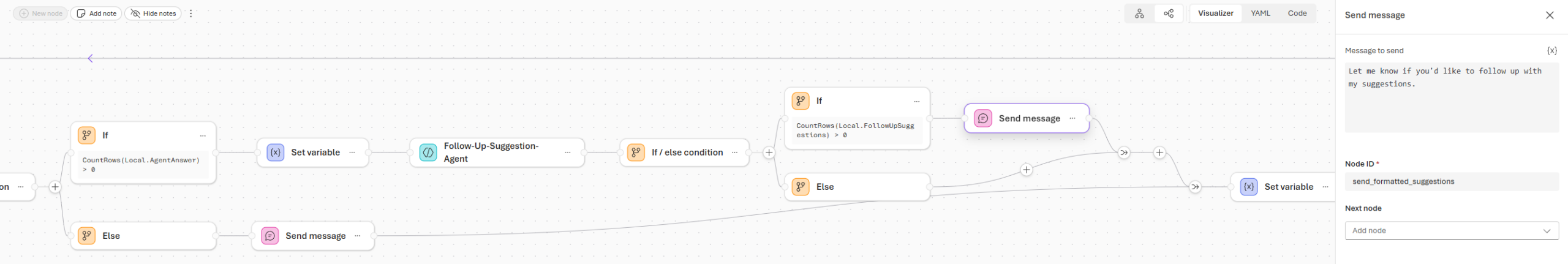

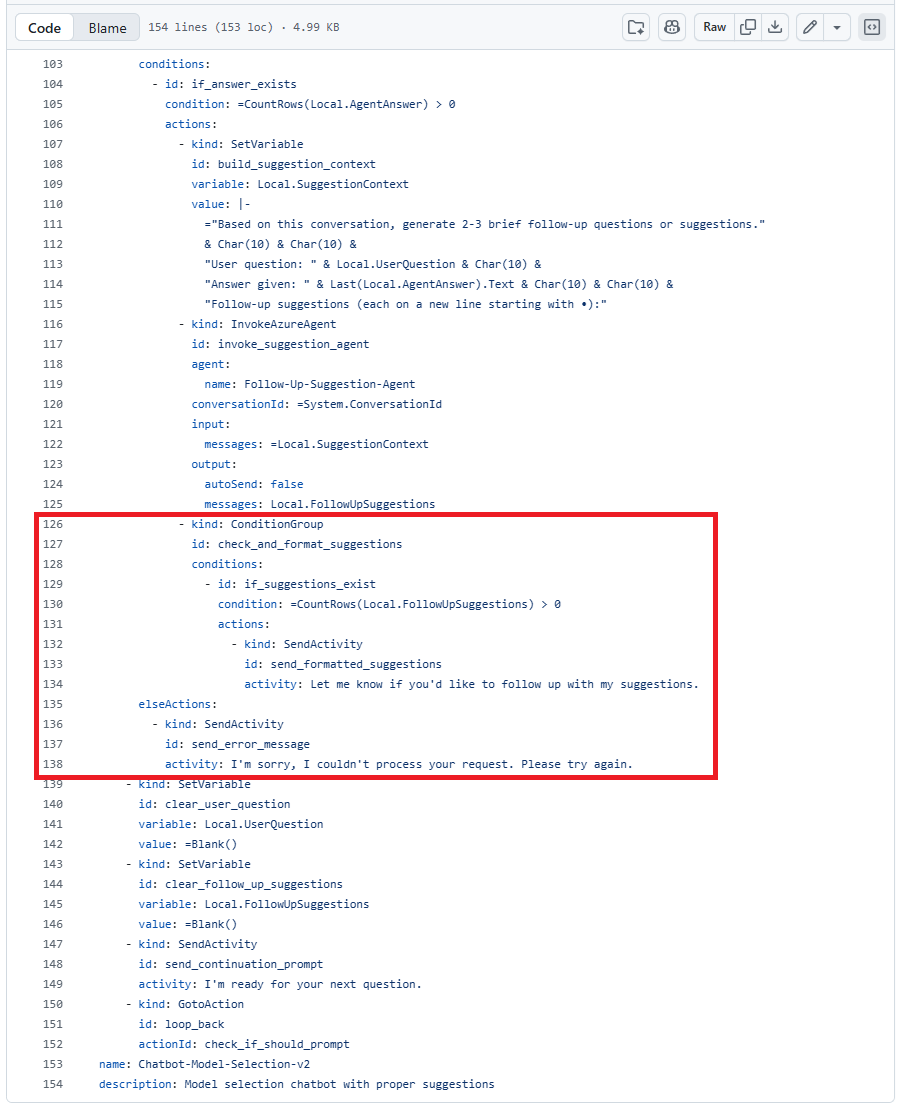

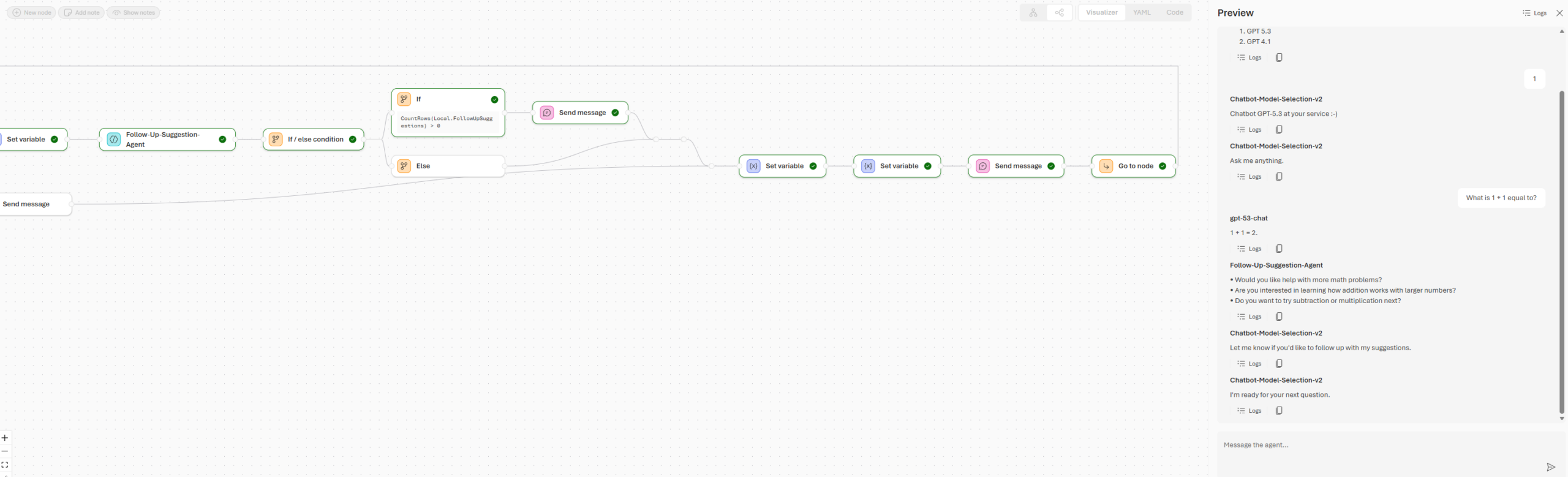
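Building the suggestion context is plain string concatenation: instructions, a Char(10) line break, the question, another line break, and the last message of the answer. The sketch below is plain Python and models Local.AgentAnswer as a list of message strings, which is an assumption about its shape; the instruction wording and the "Question:"/"Answer:" labels are invented for illustration.

```python
from typing import List

# Hypothetical instruction text; the workflow's actual wording is not reproduced here
SUGGESTION_INSTRUCTIONS = "Suggest three follow-up questions the user might ask next."


def last(messages: List[str]) -> str:
    """Take the final entry, as Last() does; this sketch returns '' when the list is empty."""
    return messages[-1] if messages else ""


def build_suggestion_context(user_question: str, agent_answer: List[str]) -> str:
    newline = chr(10)  # the same character Power Fx Char(10) produces
    return (
        SUGGESTION_INSTRUCTIONS
        + newline
        + "Question: "
        + user_question
        + newline
        + "Answer: "
        + last(agent_answer)
    )
```

If the answer arrived as a partial chunk followed by the full text, only the full text ends up in the context, which is the point of using Last().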

Step 11: Format and display the suggestions

You might be wondering why there is also an If/Else condition that counts rows:

Agents can sometimes fail to respond due to timeouts, errors, or other reasons. Using CountRows() > 0 to determine whether suggestions were generated lets us handle failures gracefully and show a friendly error message instead of having the workflow crash or behave unpredictably. If suggestion generation fails, the message “I’m sorry, I couldn’t process your request. Please try again.” is presented.

If suggestion generation succeeds, the message “Let me know if you’d like to follow up with my suggestions.” is presented to the user.

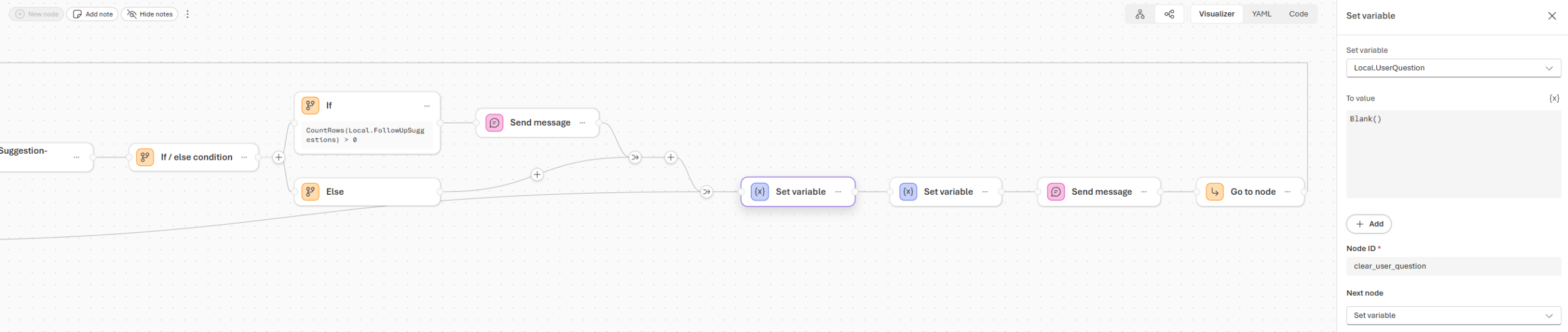

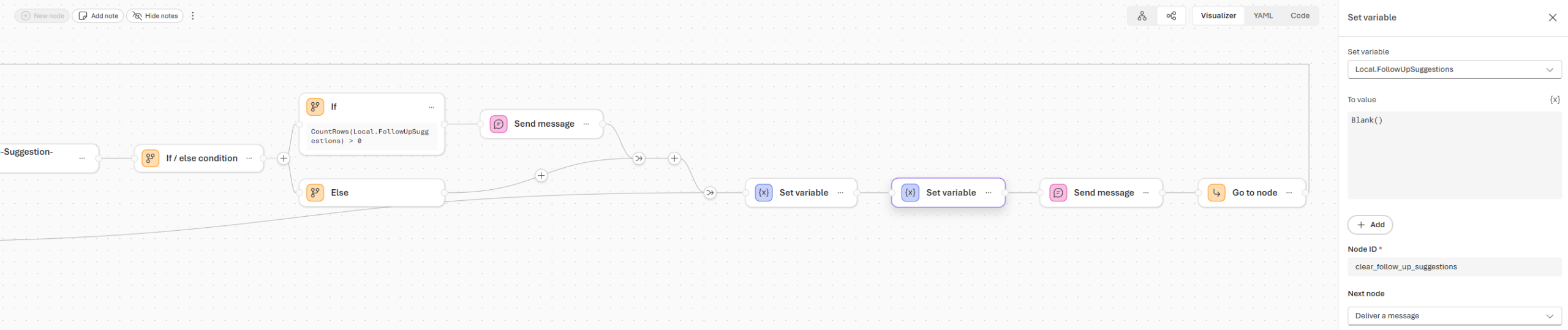

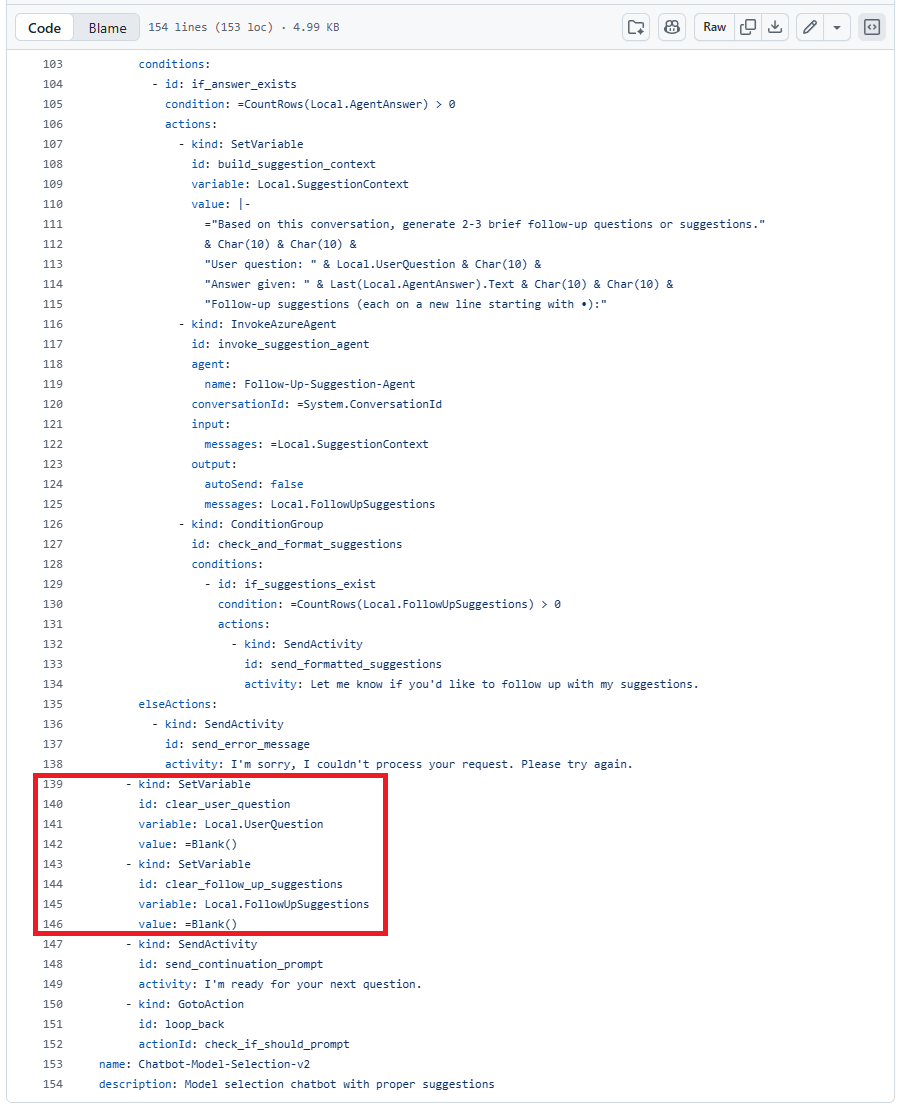

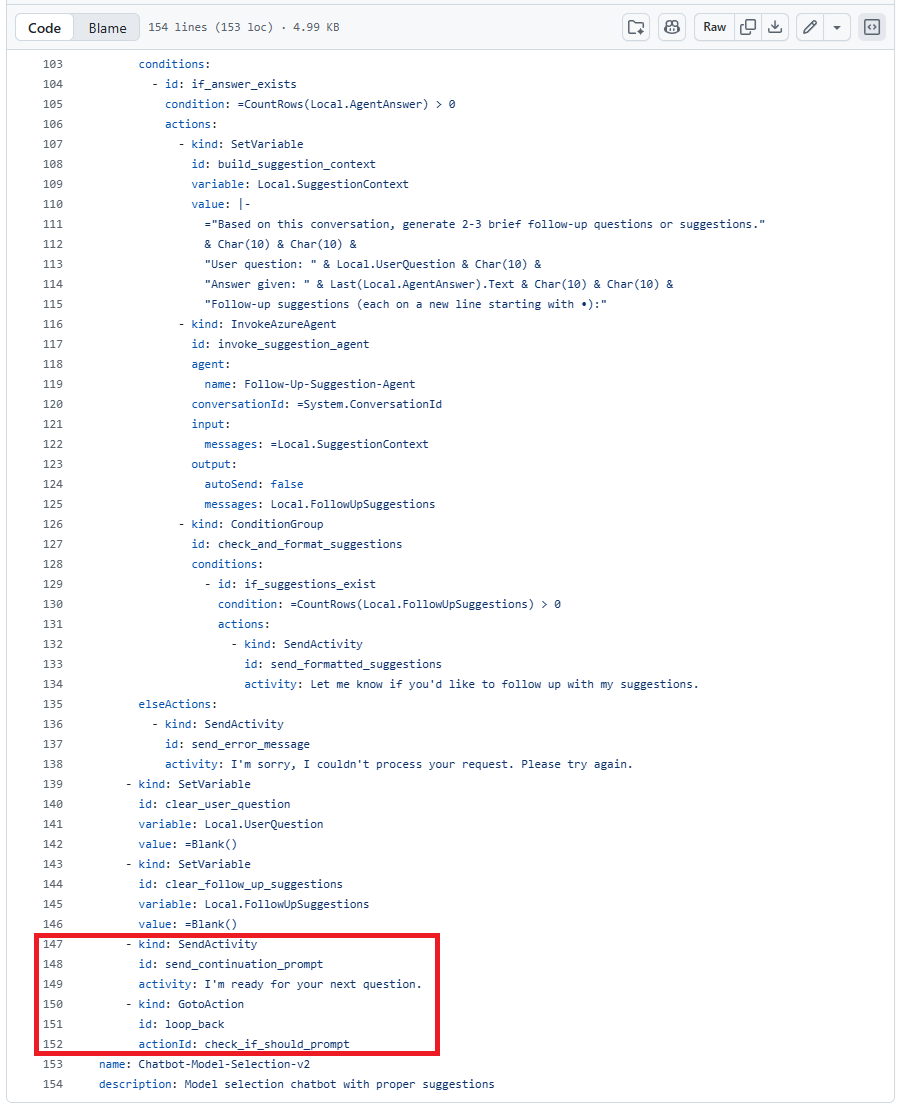
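The If/Else in Step 11 is a row-count guard around the output. In the plain-Python sketch below, len() stands in for CountRows(), and the bullet formatting is an assumption modeled on the demo output rather than something the text specifies.

```python
from typing import List

FAILURE_MESSAGE = "I'm sorry, I couldn't process your request. Please try again."
SUCCESS_MESSAGE = "Let me know if you'd like to follow up with my suggestions."


def format_suggestions(follow_up_suggestions: List[str]) -> str:
    """Mirror the Step 11 If/Else: CountRows(...) > 0 becomes len(...) > 0."""
    if len(follow_up_suggestions) > 0:
        # Bullet formatting is an assumption modeled on the demo output
        bullets = "\n".join(f"• {item}" for item in follow_up_suggestions)
        return bullets + "\n" + SUCCESS_MESSAGE
    # Nothing came back from the suggestion agent: show the friendly error instead
    return FAILURE_MESSAGE
```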

Step 12: Clean up variables for the next iteration

The interaction with the user is now complete, and before repeating the loop we need to clear the variables with the Power Fx Blank() function. Clearing Local.UserQuestions prevents a scenario where the agent fails to process the next question and answer, and the following loop then proceeds with stale data that was never processed:

Likewise, Local.FollowUpSuggestions is cleared so that if the suggestion agent fails on the next run, the CountRows(Local.FollowUpSuggestions) check doesn’t pick up the previous run’s data and make it look like suggestions were successfully created.

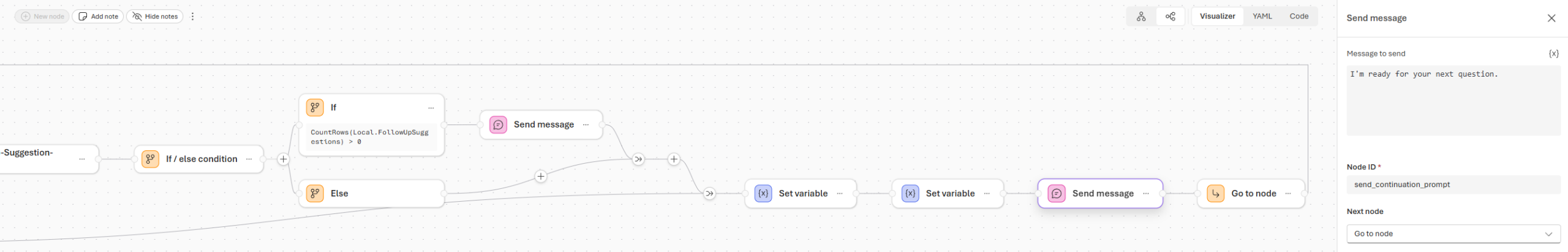

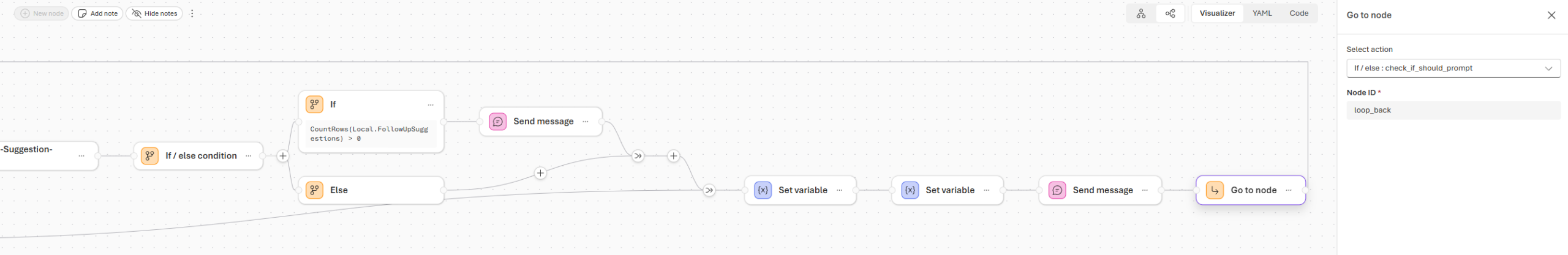
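The value of the reset is easiest to see with stale state. In this plain-Python sketch, None stands in for Blank(), and the dictionary keys reuse the variable names from the text (minus the Local. prefix) purely for illustration; the real workflow variables live in AI Foundry, not in a dictionary.

```python
from collections.abc import Sized
from typing import Any, Dict, Optional


def count_rows(value: Optional[Sized]) -> int:
    # This sketch treats a blank (None) value as having zero rows
    return 0 if value is None else len(value)


def reset_iteration_state(state: Dict[str, Any]) -> None:
    """Step 12: blank the per-iteration values but keep the model choice for the whole conversation."""
    state["UserQuestions"] = None
    state["FollowUpSuggestions"] = None
```

Without the reset, a failed suggestion run on the next pass would still see the previous run's rows, so the row-count check would wrongly report success.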

Step 13: Prompt for next question and loop

We are now at the end of the loop, where we notify the user that we’re ready for the next question and then send them back to the point just after the LLM model selection (we’re not going to ask them to select the model again), which is the beginning of the question flow:

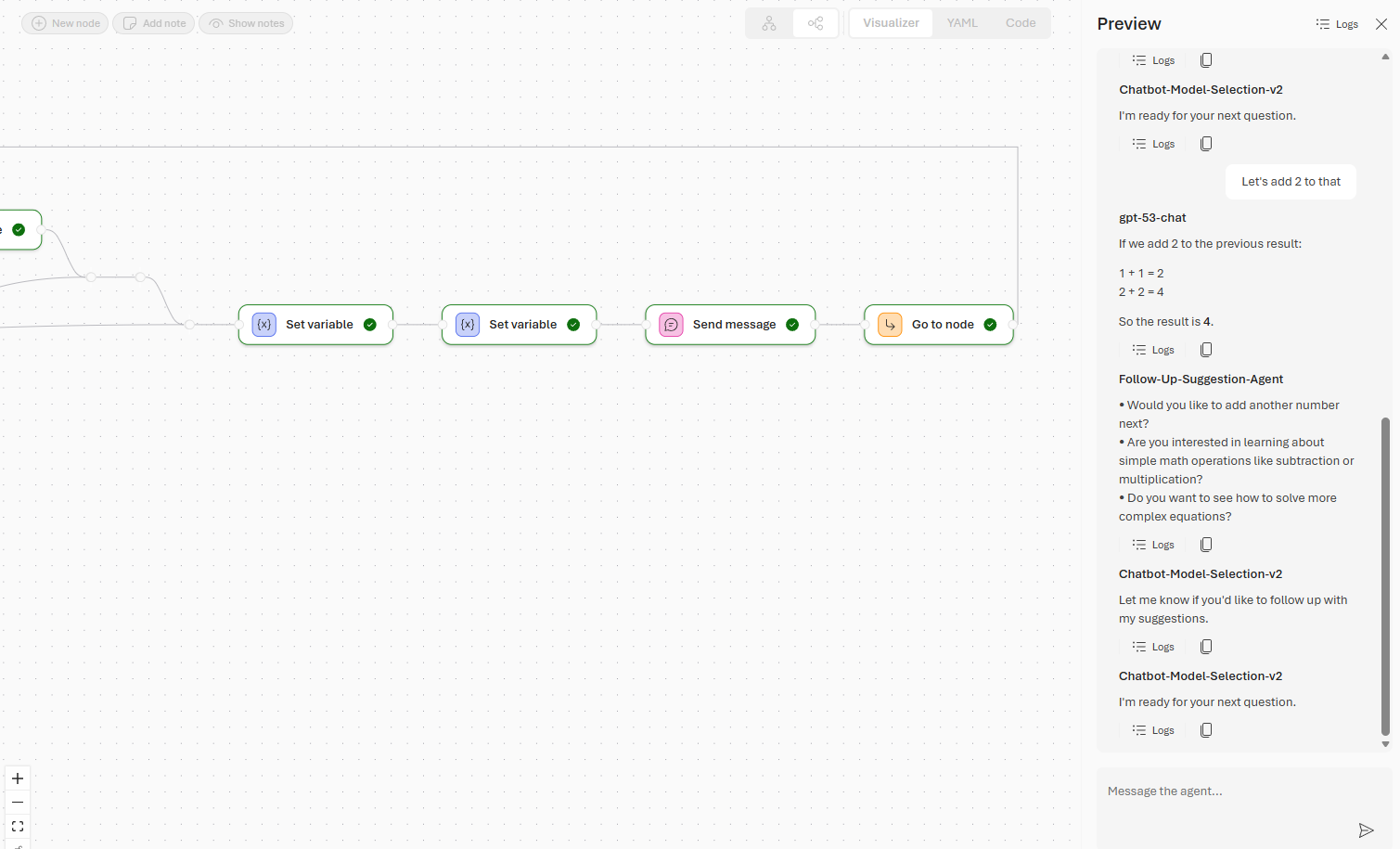
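Putting Steps 2 through 13 together, the overall control flow is "select a model once, then loop forever". The plain-Python simulation below is a sketch under stated assumptions: the option mapping, greeting, and "ready for the next question" wording are hypothetical, the answer and suggestion agents are injected as plain functions, and the loop ends when the scripted inputs run out instead of waiting indefinitely.

```python
from typing import Callable, Iterator, List

AnswerFn = Callable[[str, str, List[str]], str]
SuggestFn = Callable[[str, str], List[str]]


def run_workflow(inputs: Iterator[str], answer: AnswerFn, suggest: SuggestFn) -> List[str]:
    """Simulate the flow: choose a model once, then loop question -> answer -> suggestions."""
    transcript: List[str] = ["Which model would you like? 1) GPT-5.3  2) GPT-4.1"]
    models = {"1": "gpt-5.3", "2": "gpt-4.1"}  # hypothetical option mapping
    selected_model = models.get(next(inputs, "").strip())
    if selected_model is None:
        return transcript  # invalid-input handling in the real workflow isn't covered here
    transcript.append(f"You're now chatting with {selected_model}.")
    history: List[str] = []  # stands in for the history tied to System.ConversationId
    is_first_question = True

    # Each pass corresponds to one GotoAction jump back to the question step
    for question in inputs:
        if is_first_question:
            transcript.append("Ask me anything.")
            is_first_question = False
        history.append(question)
        reply = answer(selected_model, question, history)
        history.append(reply)
        transcript.append(reply)

        # suggestions is rebound on every pass, so no explicit Step 12 reset is needed here
        suggestions = suggest(question, reply)
        if len(suggestions) > 0:
            transcript.extend(f"• {s}" for s in suggestions)
            transcript.append("Let me know if you'd like to follow up with my suggestions.")
        else:
            transcript.append("I'm sorry, I couldn't process your request. Please try again.")

        transcript.append("What else would you like to ask?")  # hypothetical Step 13 wording
    return transcript
```

Keeping the model choice outside the loop mirrors why Step 13 jumps back past the model selection rather than to the start.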

Understanding Context Preservation

I want to take a bit of time to explain how this workflow maintains conversation context across multiple iterations of the loop. Let’s use the following conversation as an example:

Iteration 1:

User: “What is 1 + 1 equal to?”

Agent: “1 + 1 = 2.”

Iteration 2:

User: “Now add 2 to that.”

Agent: “Adding 2 to the previous result (2) gives us 4.”

So how does this work?

When we invoke the agent with conversationId: =System.ConversationId, we’re telling AI Foundry to use the same conversation session. The underlying model receives the full message history, not just the current question. This happens automatically and we do not need to manually concatenate history.

The System.ConversationId is a built-in variable that uniquely identifies the current conversation session. By consistently passing this ID, we ensure the agent has access to the complete conversation context.

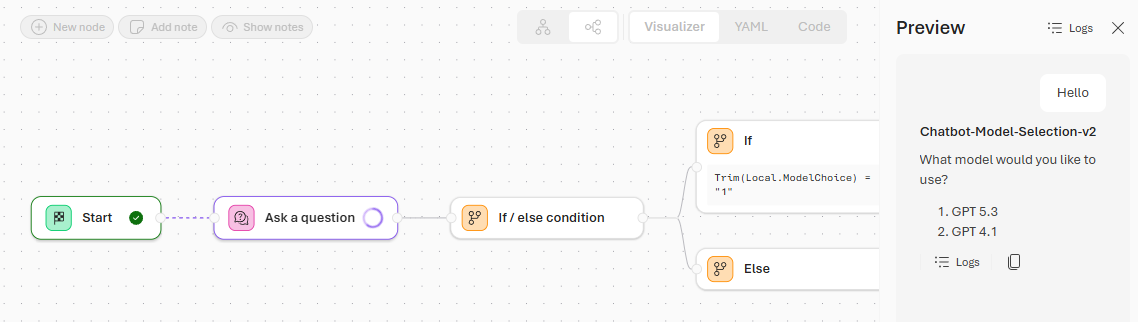
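To picture what passing the same ID buys you, the toy plain-Python sketch below keeps message history keyed by conversation ID, so the second question arrives together with the first exchange. It is only an analogy: the dictionary and function names are hypothetical, and the sketch does not describe how AI Foundry actually stores conversations.

```python
from collections import defaultdict
from typing import DefaultDict, List, Tuple

Message = Tuple[str, str]  # (role, text)

# Toy stand-in for the service-side conversation store, keyed by conversation id
_conversations: DefaultDict[str, List[Message]] = defaultdict(list)


def send_user_message(conversation_id: str, text: str) -> List[Message]:
    """Append the user's message and return the full history a model would receive."""
    _conversations[conversation_id].append(("user", text))
    return list(_conversations[conversation_id])


def record_agent_reply(conversation_id: str, text: str) -> None:
    """Store the agent's reply under the same conversation id."""
    _conversations[conversation_id].append(("assistant", text))
```

Replaying the 1 + 1 example against "conv-1" returns all three messages on the second question, while a different ID starts with an empty history.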

Workflow Demo

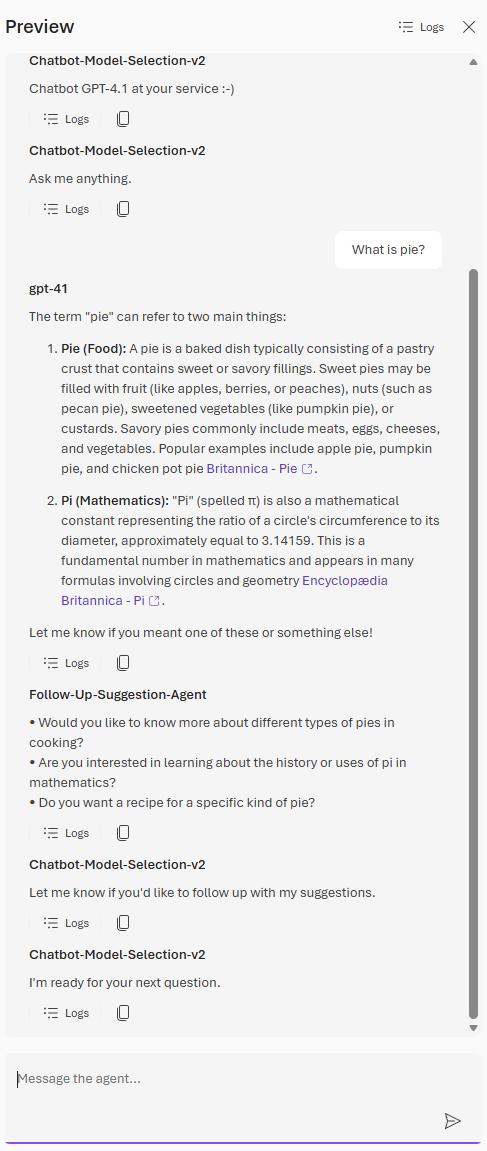

Demo #1

Let me now provide a demo run to show the workflow execution:

2nd run with previous loop’s knowledge:

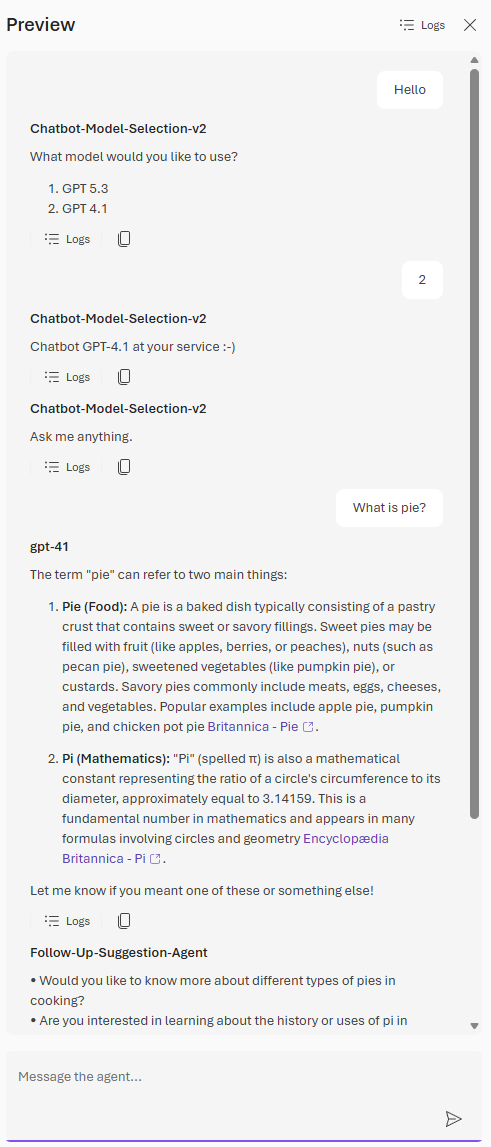

Demo #2

Let’s try another question:

Iteration 1:

User: “What is Pie?”

Agent: “The term “pie” can refer to two main things:

- Pie (Food): A pie is a baked dish typically consisting of a pastry crust that contains sweet or savory fillings. Sweet pies may be filled with fruit (like apples, berries, or peaches), nuts (such as pecan pie), sweetened vegetables (like pumpkin pie), or custards. Savory pies commonly include meats, eggs, cheeses, and vegetables. Popular examples include apple pie, pumpkin pie, and chicken pot pie (Britannica – Pie).

- Pi (Mathematics): “Pi” (spelled π) is also a mathematical constant representing the ratio of a circle’s circumference to its diameter, approximately equal to 3.14159. This is a fundamental number in mathematics and appears in many formulas involving circles and geometry (Encyclopædia Britannica – Pi).

Let me know if you meant one of these or something else!”

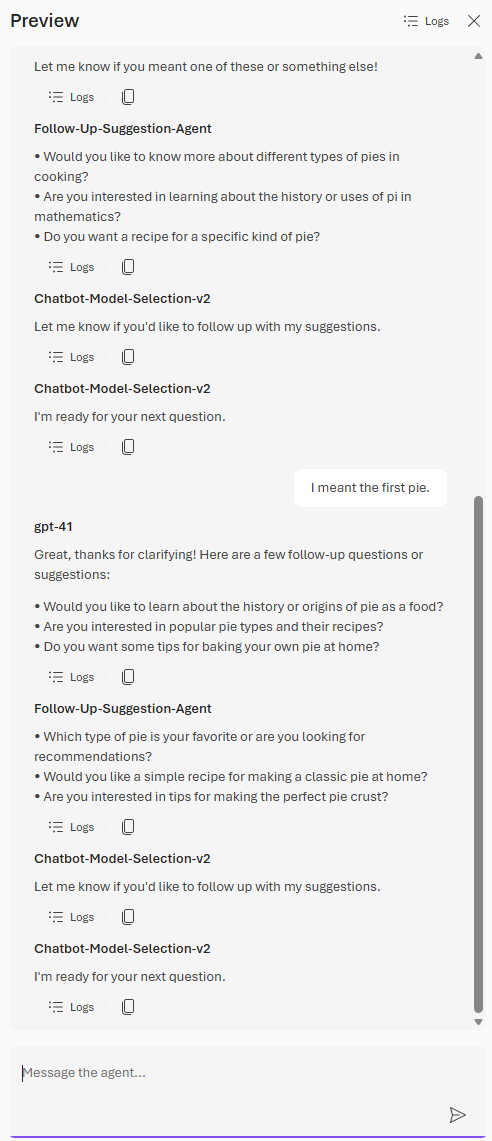

Iteration 2:

User: “I meant the first pie.”

Agent: “Great, thanks for clarifying! Here are a few follow-up questions or suggestions:

• Would you like to learn about the history or origins of pie as a food?

• Are you interested in popular pie types and their recipes?

• Do you want some tips for baking your own pie at home?”

… and there we have it. The opportunities to expand this workflow, or to build new ones for other use cases, are limitless, and I hope this gives anyone looking for a demonstration a taste of the possibilities with AI Foundry.